Install OpenStack¶

In the previous section, we installed Juju and created a Juju controller and model. We are now going to use Juju to install OpenStack itself. There are two methods to choose from:

By individual charm. This method provides a solid understanding of how Juju works and of how OpenStack is put together. Choose this option if you have never installed OpenStack with Juju.

By charm bundle. This method provides an automated means to install OpenStack. Choose this option if you are familiar with how OpenStack is built with Juju.

The current page is devoted to method #1. See Deploying OpenStack from a bundle for method #2.

Important

Irrespective of install method, once the cloud is deployed, the following management practices related to charm versions and machine series are recommended:

The entire suite of charms used to manage the cloud should be upgraded to the latest stable charm revision before any major change is made to the cloud (e.g. migrating to new charms, upgrading cloud services, upgrading machine series). See Charm upgrades for details.

The Juju machines that comprise the cloud should all be running the same series (e.g. ‘xenial’ or ‘bionic’, but not a mix of the two). See Series upgrade for details.

Despite the length of this page, the deployment itself relies on only three distinct Juju commands: juju deploy, juju add-unit, and juju add-relation. You may want to review the pertinent sections of the Juju documentation before continuing.

This page shows how to install a minimal non-HA OpenStack cloud. See OpenStack high availability for guidance on deploying a highly available cloud.

OpenStack release¶

As the guide’s Overview section states, OpenStack Train will be deployed atop Ubuntu 18.04 LTS (Bionic) cloud nodes. To achieve this, a “cloud archive pocket” of ‘cloud:bionic-train’ will be used during the install of each OpenStack application. Note that some applications are not part of the OpenStack project per se, so the pocket does not apply to them (exceptionally, Ceph applications do use this method). If no cloud archive pocket is specified, the result will be a Queens deployment, since Queens is the release in the Ubuntu package archive for Bionic.

See Perform the upgrade in the OpenStack Upgrades appendix for more details on the cloud archive pocket and how it is used when upgrading OpenStack.
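As a small sketch of how the pocket string is put together and where it goes, the helpers below build ‘cloud:bionic-train’ from a series and a release, and report which option name carries it for the charms configured on this page. The function names pocket and origin_option are hypothetical, and the mapping covers only the charms whose configuration files in this guide set a pocket.

```shell
#!/bin/sh
# Build a cloud archive pocket string from an Ubuntu series and an OpenStack release.
pocket() {
    printf 'cloud:%s-%s\n' "$1" "$2"
}

# Option name that carries the pocket, limited to the charms that are given
# one in this guide's configuration files. Any other name returns non-zero.
origin_option() {
    case "$1" in
        ceph-osd|ceph-mon)
            echo "source" ;;
        nova-compute|swift-storage|neutron-gateway|neutron-api|keystone|\
        nova-cloud-controller|placement|openstack-dashboard|glance|cinder)
            echo "openstack-origin" ;;
        *)
            return 1 ;;
    esac
}

# Print the YAML-style option line for a few of the charms.
for charm in ceph-osd nova-compute keystone; do
    printf '%s -> %s: %s\n' "$charm" "$(origin_option "$charm")" "$(pocket bionic train)"
done
```

Applications that are given no pocket on this page (for example the one deployed as ‘mysql’) make origin_option return a non-zero status rather than guess.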

Important

The chosen OpenStack release may impact the installation and configuration instructions. This guide assumes that OpenStack Train is being deployed.

Installation progress¶

There are many moving parts involved in a charmed OpenStack install. During much of the process there will be dependencies that have not yet been satisfied, which will cause error-like messages to be displayed in the output of the juju status command. Do not be alarmed. Indeed, these are opportunities to learn about the interdependencies of the various pieces of software. Messages such as Missing relation and blocked will vanish once the appropriate applications and relations have been added and processed.

Tip

One convenient way to monitor the installation progress is to keep the command watch -n 5 -c juju status --color running in a separate terminal.

Deploy OpenStack¶

Assuming you have precisely followed the instructions on the Install Juju page, you should now have a Juju controller called ‘maas-controller’ and an empty Juju model called ‘openstack’. Change to that context now:

juju switch maas-controller:openstack

In the following sections, the various OpenStack components will be added to the ‘openstack’ model. Each application will be installed from the online Charm store and each will typically have configuration options specified via its own YAML file.

Note

You do not need to wait for a Juju command to complete before issuing further ones. However, it can be very instructive to see the effect one command has on the current state of the cloud.

Ceph OSD¶

The ceph-osd application is deployed to four nodes with the ceph-osd charm. The names of the block devices backing the OSDs depend on the hardware of the nodes. Here, we’ll be using the same second drive on each cloud node: /dev/sdb. File ceph-osd.yaml contains the configuration. If your devices are not identical across the nodes you will need separate files (or stipulate them on the command line):

ceph-osd:
  osd-devices: /dev/sdb
  source: cloud:bionic-train

To deploy the application we’ll make use of the ‘compute’ tag we placed on each of these nodes on the Install MAAS page.

juju deploy --constraints tags=compute --config ceph-osd.yaml -n 4 ceph-osd
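As a minimal sketch, the configuration file and the deploy command could be prepared from a shell like this. The option lines are nested two spaces under the application name, which is the layout the YAML file needs. The script only prints the deploy command, on the assumption that you will run it yourself once the file looks right.

```shell
#!/bin/sh
# Write ceph-osd.yaml; the options are nested under the application name.
cat > ceph-osd.yaml <<'EOF'
ceph-osd:
  osd-devices: /dev/sdb
  source: cloud:bionic-train
EOF

# Show the file, then the command from this page (printed, not executed).
cat ceph-osd.yaml
echo "juju deploy --constraints tags=compute --config ceph-osd.yaml -n 4 ceph-osd"
```

The same heredoc pattern works for the other configuration files on this page; only the application name and the option lines change.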

If a message from a ceph-osd unit like “Non-pristine devices detected” appears in the output of juju status, you will need to use the zap-disk and add-disk actions that come with the ‘ceph-osd’ charm. The zap-disk action is destructive in nature. Only use it if you want to purge the disk of all data and signatures for use by Ceph.
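For illustration, the two actions could be invoked roughly as follows. This is a sketch rather than a verified procedure: the unit name ceph-osd/0 is a placeholder, the helper name osd_reclaim_cmds is hypothetical, and the parameter names (devices, i-really-mean-it, osd-devices) are assumed from the charm's action definitions, so confirm them with juju actions ceph-osd --schema first. The script only prints the commands so that nothing destructive runs by accident.

```shell
#!/bin/sh
# Print the zap-disk and add-disk commands for one unit and one device.
# Usage: osd_reclaim_cmds UNIT DEVICE
osd_reclaim_cmds() {
    unit="$1"
    device="$2"
    # zap-disk wipes all data and signatures from the device (destructive).
    printf 'juju run-action --wait %s zap-disk devices=%s i-really-mean-it=true\n' "$unit" "$device"
    # add-disk then offers the cleaned device to Ceph as an OSD.
    printf 'juju run-action --wait %s add-disk osd-devices=%s\n' "$unit" "$device"
}

# Placeholder unit; /dev/sdb is the device used on this page.
osd_reclaim_cmds ceph-osd/0 /dev/sdb
```

Review the printed commands against the unit that actually reported the non-pristine device before running them.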

Note

Since ceph-osd was deployed on four nodes and there are only four nodes available in this environment, the usage of the ‘compute’ tag is not strictly necessary.

Nova compute¶

The nova-compute application is deployed to one node with the nova-compute charm. We’ll then scale out the application to two other machines. File compute.yaml contains the configuration:

nova-compute:
  enable-live-migration: true
  enable-resize: true
  migration-auth-type: ssh
  openstack-origin: cloud:bionic-train

The initial node must be targeted by machine since there are no more free Juju machines (MAAS nodes) available. This means we’re placing multiple services on our nodes. We’ve chosen machine 1:

juju deploy --to 1 --config compute.yaml nova-compute

Now scale out to machines 2 and 3:

juju add-unit --to 2 nova-compute

juju add-unit --to 3 nova-compute

Note

The ‘nova-compute’ charm is designed to support one image format type per application at any given time. Changing format (see charm option libvirt-image-backend) while existing instances are using the prior format will require manual image conversion for each instance. See bug LP #1826888.

Swift storage¶

The swift-storage application is deployed to one node (machine 0) with the swift-storage charm and then scaled out to three other machines. File swift-storage.yaml contains the configuration:

swift-storage:
  block-device: sdc
  overwrite: "true"
  openstack-origin: cloud:bionic-train

This configuration points to block device /dev/sdc. Adjust according to your available hardware. In a production environment, avoid using a loopback device.

Here are the four commands for the four machines (one juju deploy followed by three juju add-unit):

juju deploy --to 0 --config swift-storage.yaml swift-storage

juju add-unit --to 1 swift-storage

juju add-unit --to 2 swift-storage

juju add-unit --to 3 swift-storage

Neutron networking¶

Neutron networking is implemented with three applications:

neutron-gateway

neutron-api

neutron-openvswitch

File neutron.yaml contains the configuration for two of them:

neutron-gateway:
  data-port: br-ex:eth1
  bridge-mappings: physnet1:br-ex
  openstack-origin: cloud:bionic-train
neutron-api:
  neutron-security-groups: true
  flat-network-providers: physnet1
  openstack-origin: cloud:bionic-train

Note

The neutron-openvswitch charm does not support option openstack-origin due to it being a subordinate charm to the nova-compute charm, which does support it.

The data-port setting refers to a network interface that Neutron Gateway will bind to. In the above example it is ‘eth1’ and it should be an unused interface. In MAAS this interface must be given an IP mode of ‘Unconfigured’ (see Post-commission configuration in the MAAS documentation). Set all four nodes in this way to ensure that any node is able to accommodate Neutron Gateway.

The flat-network-providers setting enables the Neutron flat network provider used in this example scenario and gives it the name of ‘physnet1’. The flat network provider and its name will be referenced when we Set up public networking on the next page.

The bridge-mappings setting maps the data-port interface to the flat network provider.

The neutron-gateway application will be deployed directly on machine 0:

juju deploy --to 0 --config neutron.yaml neutron-gateway

The neutron-api application will be deployed as a container on machine 1:

juju deploy --to lxd:1 --config neutron.yaml neutron-api

The neutron-openvswitch application will be deployed by means of a subordinate charm (it will be installed on a machine once its relation is added):

juju deploy neutron-openvswitch

Three relations need to be added:

juju add-relation neutron-api:neutron-plugin-api neutron-gateway:neutron-plugin-api

juju add-relation neutron-api:neutron-plugin-api neutron-openvswitch:neutron-plugin-api

juju add-relation neutron-openvswitch:neutron-plugin nova-compute:neutron-plugin

Caution

Co-locating units of neutron-openvswitch and neutron-gateway will cause APT package incompatibility between the two charms on the underlying host. The result is that packages for these services will be removed: neutron-metadata-agent, neutron-dhcp-agent, and neutron-l3-agent.

The alternative is to run the neutron-gateway unit in an LXD container or on a different host entirely. Another option is to run neutron-openvswitch in DVR mode.

Recall that neutron-openvswitch is a subordinate charm; its host is determined via a relation between it and a principal charm (e.g. nova-compute).

Percona cluster¶

The Percona XtraDB cluster is the OpenStack database of choice. The percona-cluster application is deployed as a single LXD container on machine 0 with the percona-cluster charm. File mysql.yaml contains the configuration:

mysql:
  max-connections: 20000

To deploy Percona while giving it an application name of ‘mysql’:

juju deploy --to lxd:0 --config mysql.yaml percona-cluster mysql

Only a single relation is needed:

juju add-relation neutron-api:shared-db mysql:shared-db

Keystone¶

The keystone application is deployed as a single LXD container on machine 3. File keystone.yaml contains the configuration:

keystone:
  openstack-origin: cloud:bionic-train

To deploy:

juju deploy --to lxd:3 --config keystone.yaml keystone

Then add these two relations:

juju add-relation keystone:shared-db mysql:shared-db

juju add-relation keystone:identity-service neutron-api:identity-service

RabbitMQ¶

The rabbitmq-server application is deployed as a single LXD container on machine 0 with the rabbitmq-server charm. No additional configuration is required. To deploy:

juju deploy --to lxd:0 rabbitmq-server

Four relations are needed:

juju add-relation rabbitmq-server:amqp neutron-api:amqp

juju add-relation rabbitmq-server:amqp neutron-openvswitch:amqp

juju add-relation rabbitmq-server:amqp nova-compute:amqp

juju add-relation rabbitmq-server:amqp neutron-gateway:amqp
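The four relations above all pair rabbitmq-server:amqp with another application's amqp endpoint, so they can be generated in a loop. The sketch below prints the commands instead of running them; the helper name amqp_relation_cmds is hypothetical.

```shell
#!/bin/sh
# Print one 'juju add-relation' command per application name given.
amqp_relation_cmds() {
    for app in "$@"; do
        printf 'juju add-relation rabbitmq-server:amqp %s:amqp\n' "$app"
    done
}

# The four applications related to RabbitMQ in this section.
amqp_relation_cmds neutron-api neutron-openvswitch nova-compute neutron-gateway
```

Piping the output to sh would execute the commands, assuming the Juju client is installed and the ‘openstack’ model is the current one.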

Nova cloud controller¶

The nova-cloud-controller application, which includes the nova-scheduler, nova-api, and nova-conductor services, is deployed as a single LXD container on machine 2 with the nova-cloud-controller charm. File controller.yaml contains the configuration:

nova-cloud-controller:
  network-manager: Neutron
  openstack-origin: cloud:bionic-train

To deploy:

juju deploy --to lxd:2 --config controller.yaml nova-cloud-controller

Relations need to be added for six applications:

juju add-relation nova-cloud-controller:shared-db mysql:shared-db

juju add-relation nova-cloud-controller:identity-service keystone:identity-service

juju add-relation nova-cloud-controller:amqp rabbitmq-server:amqp

juju add-relation nova-cloud-controller:quantum-network-service neutron-gateway:quantum-network-service

juju add-relation nova-cloud-controller:neutron-api neutron-api:neutron-api

juju add-relation nova-cloud-controller:cloud-compute nova-compute:cloud-compute

Placement¶

The placement application is deployed as a single LXD container on machine 2 with the placement charm. File placement.yaml contains the configuration:

placement:
  openstack-origin: cloud:bionic-train

To deploy:

juju deploy --to lxd:2 --config placement.yaml placement

Relations need to be added for three applications:

juju add-relation placement:shared-db mysql:shared-db

juju add-relation placement:identity-service keystone:identity-service

juju add-relation placement:placement nova-cloud-controller:placement

OpenStack dashboard¶

The openstack-dashboard application (Horizon) is deployed as a single LXD container on machine 3 with the openstack-dashboard charm. File dashboard.yaml contains the configuration:

openstack-dashboard:
  openstack-origin: cloud:bionic-train

To deploy:

juju deploy --to lxd:3 --config dashboard.yaml openstack-dashboard

A single relation is required:

juju add-relation openstack-dashboard:identity-service keystone:identity-service

Glance¶

The glance application is deployed as a single LXD container on machine 2 with the glance charm. File glance.yaml contains the configuration:

glance:
  openstack-origin: cloud:bionic-train

To deploy:

juju deploy --to lxd:2 --config glance.yaml glance

Five relations are needed:

juju add-relation glance:image-service nova-cloud-controller:image-service

juju add-relation glance:image-service nova-compute:image-service

juju add-relation glance:shared-db mysql:shared-db

juju add-relation glance:identity-service keystone:identity-service

juju add-relation glance:amqp rabbitmq-server:amqp

Ceph monitor¶

The ceph-mon application is deployed as an LXD container on each of machines 1, 2, and 3 with the ceph-mon charm. File ceph-mon.yaml contains the configuration:

ceph-mon:
  source: cloud:bionic-train

To deploy:

juju deploy --to lxd:1 --config ceph-mon.yaml ceph-mon

juju add-unit --to lxd:2 ceph-mon

juju add-unit --to lxd:3 ceph-mon

Three relations are needed:

juju add-relation ceph-mon:osd ceph-osd:mon

juju add-relation ceph-mon:client nova-compute:ceph

juju add-relation ceph-mon:client glance:ceph

The last relation makes Ceph the backend for Glance.

Cinder¶

The cinder application is deployed to a container on machine 1 with the cinder charm. File cinder.yaml contains the configuration:

cinder:
  glance-api-version: 2
  block-device: None
  openstack-origin: cloud:bionic-train

To deploy:

juju deploy --to lxd:1 --config cinder.yaml cinder

Relations need to be added for five applications:

juju add-relation cinder:cinder-volume-service nova-cloud-controller:cinder-volume-service

juju add-relation cinder:shared-db mysql:shared-db

juju add-relation cinder:identity-service keystone:identity-service

juju add-relation cinder:amqp rabbitmq-server:amqp

juju add-relation cinder:image-service glance:image-service

In addition, like Glance, Cinder will use Ceph as its backend. This will be implemented via the cinder-ceph subordinate charm:

juju deploy cinder-ceph

A relation is needed for both Cinder and Ceph:

juju add-relation cinder-ceph:storage-backend cinder:storage-backend

juju add-relation cinder-ceph:ceph ceph-mon:client

Swift proxy¶

The swift-proxy application is deployed to a container on machine 0 with the swift-proxy charm. File swift-proxy.yaml contains the configuration:

swift-proxy:
  zone-assignment: auto
  swift-hash: "<uuid>"

Swift proxy needs to be supplied with a unique identifier (UUID). Generate one with the uuid -v 4 command (you may need to first install the uuid deb package) and insert it into the file.
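One way to generate the UUID and place it in the file in a single step is sketched below. Only uuid -v 4 comes from this guide; the fallbacks to uuidgen and to the Linux kernel's /proc/sys/kernel/random/uuid are assumptions added so the script still works where the uuid package is not installed, and the helper name gen_uuid is hypothetical.

```shell
#!/bin/sh
# Return a UUID from whichever source is available on this host.
gen_uuid() {
    if command -v uuid >/dev/null 2>&1; then
        uuid -v 4
    elif command -v uuidgen >/dev/null 2>&1; then
        uuidgen
    else
        cat /proc/sys/kernel/random/uuid
    fi
}

SWIFT_HASH="$(gen_uuid)"

# Write swift-proxy.yaml with the generated value in place of <uuid>.
cat > swift-proxy.yaml <<EOF
swift-proxy:
  zone-assignment: auto
  swift-hash: "${SWIFT_HASH}"
EOF

cat swift-proxy.yaml
```

Running the script again generates a new value, so create the file once and keep it unchanged after the application has been deployed.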

To deploy:

juju deploy --to lxd:0 --config swift-proxy.yaml swift-proxy

Two relations are needed:

juju add-relation swift-proxy:swift-storage swift-storage:swift-storage

juju add-relation swift-proxy:identity-service keystone:identity-service

Final results and dashboard access¶

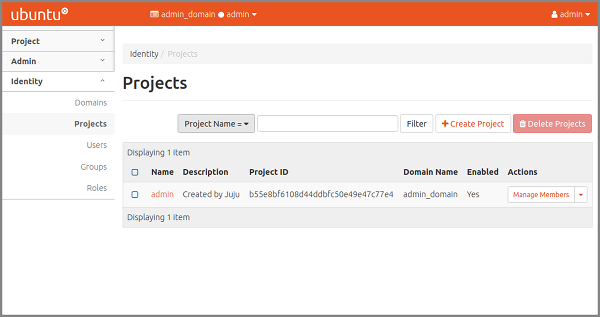

Once all the applications have been deployed and the relations between them have been added, we need to wait for the output of juju status to settle. The final results should be devoid of any error-like messages. If your terminal supports colours then you should see only green (neither amber nor red). Example (monochrome) output for a successful cloud deployment is given here.

One milestone in the deployment of OpenStack is the first login to the Horizon dashboard. You will need its IP address and the admin password.

Obtain the address in this way:

juju status --format=yaml openstack-dashboard | grep public-address | awk '{print $2}'

The password is queried from Keystone:

juju run --unit keystone/0 leader-get admin_passwd

In this example, the address is ‘10.0.0.14’ and the password is ‘kohy6shoh3diWav5’.

The dashboard URL then becomes:

http://10.0.0.14/horizon
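The address lookup and the URL can be combined in a small helper, sketched here. The function name horizon_url is hypothetical, and the sample line stands in for real juju status output so that the pipeline can be tried without a deployed cloud.

```shell
#!/bin/sh
# Read 'juju status --format=yaml' output on stdin and print the Horizon URL.
# Uses the same grep/awk pipeline as the command on this page and keeps
# only the first match.
horizon_url() {
    addr="$(grep public-address | awk '{print $2}' | head -n 1)"
    printf 'http://%s/horizon\n' "$addr"
}

# Stand-in for real status output, using the example address from this page.
sample='        public-address: 10.0.0.14'
printf '%s\n' "$sample" | horizon_url
```

Against a live model, the equivalent call would be juju status --format=yaml openstack-dashboard | horizon_url.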

And the credentials are:

User Name: admin
Password: kohy6shoh3diWav5
Domain: admin_domain

Once logged in you should see something like this:

Next steps¶

You have successfully deployed OpenStack using both Juju and MAAS. The next step is to render the cloud functional for users. This will involve setting up networks, images, and a user environment.