インスタンスのセキュリティサービス¶

インスタンスへのエントロピー¶

We consider entropy to refer to the quality and source of random data that is available to an instance. Cryptographic technologies typically rely heavily on randomness, requiring a high quality pool of entropy to draw from. It is typically hard for a virtual machine to get enough entropy to support these operations, which is referred to as entropy starvation. Entropy starvation can manifest in instances as something seemingly unrelated. For example, slow boot time may be caused by the instance waiting for ssh key generation. Entropy starvation may also motivate users to employ poor quality entropy sources from within the instance, making applications running in the cloud less secure overall.

これらの課題は、クラウドアーキテクトが高品質のエントロピーをクラウドインスタンスに提供することで対応できます。例えば、クラウド内にインスタンス用に適量なハードウェア乱数生成器(HRNG)があれば解決できます(適量はドメインによって異なる)。一般的なハードウェア乱数生成器なら通常運用されている50-100台のコンピュートノード分のエントロピーを生成することが可能です。高帯域ハードウェア乱数生成器(Intel Ivy Bridgeや最新プロセッサなどと提供されるRdRand instructionなど)はさらに多くのノードに対応できます。エントロピーの量が十分かどうかを判断するためには、クラウド上で運用するアプリケーションの要求を理解している必要があります。

The Virtio RNG is a random number generator that uses

/dev/random as the source of entropy by default, however can be

configured to use a hardware RNG or a tool such as the entropy

gathering daemon (EGD) to provide

a way to fairly and securely distribute entropy through a

distributed system. The Virtio RNG is enabled using the hw_rng

property of the metadata used to create the instance.

ノードへのインスタンスのスケジューリング¶

インスタンスを生成する前に、イメージのインスタンス化のためのホストを選択する必要があります。この選択は nova-scheduler によって行われ、さらにコンピュートとボリューム要求の伝達方法も決定します。

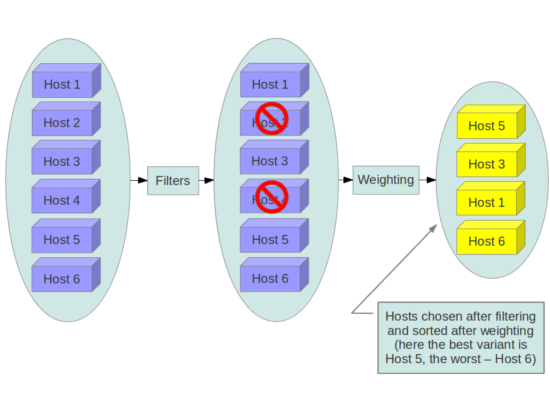

The FilterScheduler is the default scheduler for OpenStack

Compute, although other schedulers exist (see the section Scheduling

in the OpenStack Configuration Reference

). This works in collaboration with 'filter hints' to decide where an

instance should be started. This process of host selection allows

administrators to fulfill many different security and compliance

requirements. Depending on the cloud deployment type for example, one

could choose to have tenant instances reside on the same hosts whenever

possible if data isolation was a primary concern. Conversely one could

attempt to have instances for a tenant reside on as many different hosts

as possible for availability or fault tolerance reasons.

フィルタースケジューラーは、4 つのメインカテゴリーに分かれます。

- リソースベースのフィルター

これらのフィルターは、ハイパーバイザーホストの使用状況に基づきインスタンスを作成します。メモリー、I/O、CPU の使用状況などの、空きまたは使用中のプロパティーを引き金にできます。

- イメージベースのフィルター

これは、仮想マシンのオペレーティングシステムや使用されるイメージの種類など、使用されるイメージに基づいて、インスタンスの作成を権限委譲します。

- 環境ベースのフィルター

This filter will create an instance based on external details such as in a specific IP range, across availability zones, or on the same host as another instance.

- カスタムクライテリア

This filter will delegate instance creation based on user or administrator provided criteria such as trusts or metadata parsing.

Multiple filters can be applied at once, such as the

ServerGroupAffinity filter to ensure an instance is created on a

member of a specific set of hosts and ServerGroupAntiAffinity

filter to ensure that same instance is not created on another specific

set of hosts. These filters should be analyzed carefully to ensure

they do not conflict with each other and result in rules that prevent

the creation of instances.

GroupAffinity フィルターと GroupAntiAffinity フィルターは競合します。同時に有効化すべきではありません。

The DiskFilter filter is capable of oversubscribing disk space.

While not normally an issue, this can be a concern on storage devices

that are thinly provisioned, and this filter should be used with

well-tested quotas applied.

We recommend you disable filters that parse things that are provided by users or are able to be manipulated such as metadata.

信頼されたイメージ¶

In a cloud environment, users work with either pre-installed images or images they upload themselves. In both cases, users should be able to ensure the image they are utilizing has not been tampered with. The ability to verify images is a fundamental imperative for security. A chain of trust is needed from the source of the image to the destination where it's used. This can be accomplished by signing images obtained from trusted sources and by verifying the signature prior to use. Various ways to obtain and create verified images will be discussed below, followed by a description of the image signature verification feature.

イメージ作成プロセス¶

OpenStackが提供するドキュメントではイメージの作成と Image service へのアップロード方法について説明しています。ただし、オペレーティングシステムのインストールや強化のための設定方法やプロセスに関しては利用者が既に知識を持っていると想定しています。参考として、OpenStack の中でイメージがセキュアに転送されたかどうかを確認するため情報を下記に説明します。また、イメージの採取には様々な方法があり、それぞれにおいてイメージの出所を検証するための独自の手順があります。

最初の選択肢は、信頼された提供元からブートメディアを入手することです。

$ mkdir -p /tmp/download_directorycd /tmp/download_directory

$ wget http://mirror.anl.gov/pub/ubuntu-iso/CDs/precise/ubuntu-12.04.2-server-amd64.iso

$ wget http://mirror.anl.gov/pub/ubuntu-iso/CDs/precise/SHA256SUMS

$ wget http://mirror.anl.gov/pub/ubuntu-iso/CDs/precise/SHA256SUMS.gpg

$ gpg --keyserver hkp://keyserver.ubuntu.com --recv-keys 0xFBB75451

$ gpg --verify SHA256SUMS.gpg SHA256SUMSsha256sum -c SHA256SUMS 2>&1 | grep OK

The second option is to use the OpenStack Virtual Machine Image Guide. In this case, you will want to follow your organizations OS hardening guidelines or those provided by a trusted third-party such as the Linux STIGs.

最後の手段として説明するのはイメージの自動生成機構の使用です。次の例では、Oz image builderを採用しています。OpenStackコミュニティでは、disk-image-builderというさらに新しいツールが公開されています。本ツールはセキュリティ観点において未検証です。

OzでNIST 800-53 セクション AC-19(d) の実装を手助けするRHEL 6 CCE-26976-1の例

<template>

<name>centos64</name>

<os>

<name>RHEL-6</name>

<version>4</version>

<arch>x86_64</arch>

<install type='iso'>

<iso>http://trusted_local_iso_mirror/isos/x86_64/RHEL-6.4-x86_64-bin-DVD1.iso</iso>

</install>

<rootpw>CHANGE THIS TO YOUR ROOT PASSWORD</rootpw>

</os>

<description>RHEL 6.4 x86_64</description>

<repositories>

<repository name='epel-6'>

<url>http://download.fedoraproject.org/pub/epel/6/$basearch</url>

<signed>no</signed>

</repository>

</repositories>

<packages>

<package name='epel-release'/>

<package name='cloud-utils'/>

<package name='cloud-init'/>

</packages>

<commands>

<command name='update'>

yum update

yum clean all

rm -rf /var/log/yum

sed -i '/^HWADDR/d' /etc/sysconfig/network-scripts/ifcfg-eth0

echo -n > /etc/udev/rules.d/70-persistent-net.rules

echo -n > /lib/udev/rules.d/75-persistent-net-generator.rules

chkconfig --level 0123456 autofs off

service autofs stop

</command>

</commands>

</template>

It is recommended to avoid the manual image building process as it is complex and prone to error. Additionally, using an automated system like Oz for image building or a configuration management utility like Ansible or Puppet for post-boot image hardening gives you the ability to produce a consistent image as well as track compliance of your base image to its respective hardening guidelines over time.

If subscribing to a public cloud service, you should check with the cloud provider for an outline of the process used to produce their default images. If the provider allows you to upload your own images, you will want to ensure that you are able to verify that your image was not modified before using it to create an instance. To do this, refer to the following section on Image Signature Verification, or the following paragraph if signatures cannot be used.

イメージは Image サービスからノードの Compute サービスへ供給されます。この転送はTLSによって保護されている必要があります。イメージがノードに転送されたら、一般的なchecksumで検証され、起動するインスタンスのサイズに合わせてディスクが拡張します。以降、このノードで同じサイズの同一イメージを起動する場合はこの拡張されたイメージから起動されます。拡張されたイメージはデフォルトで起動前に再検証されないため、改ざんの可能性があります。これでは作成されたイメージのファイルの手動確認以外に確認方法がありません。

イメージシグネチャー検証¶

Several features related to image signing are now available in OpenStack. As of the Mitaka release, the Image service can verify these signed images, and, to provide a full chain of trust, the Compute service has the option to perform image signature verification prior to image boot. Successful signature validation before image boot ensures the signed image hasn't changed. With this feature enabled, unauthorized modification of images (e.g., modifying the image to include malware or rootkits) can be detected.

Administrators can enable instance signature verification by setting

the verify_glance_signatures flag to True in the

/etc/nova/nova.conf file. When enabled, the Compute service

automatically validates the signed instance when it is retrieved from

the Image service. If this verification fails, the boot won't occur.

The OpenStack Operations Guide provides guidance on how to create and

upload a signed image, and how to use this feature. For more

information, see Adding Signed Images

in the Operations Guide.

インスタンスのマイグレーション¶

OpenStackと下層の仮想レイヤーによってOpenStackノード間のイメージのライブマイグレーションを実現しています。これにより、インスタンスのダウンタイムなくOpenStackコンピュートノードのシームレスなローリングアップデートが可能です。ただし、ライブマイグレーションにはそれなりのリスクが伴うことを注意する必要があります。関連するリスクを理解するために、以下にライブマイグレーションの際のおおまかな流れを紹介しています。

目的先ホストでインスタンスを起動

メモリを転送

テストの停止およびディスクの同期

転送状態となる

ゲストを起動

ライブマイグレーションのリスク¶

ライブマイグレーションのステージによっては、インスタンスのランタイムメモリやディスクの今テンスが平文でネットワーク上転送されます。そのため、ライブマイグレーション中には対処が必要なリスクがあります。次は一部のリスクの詳細を列挙しています。

Denial of Service (DoS): マイグレーションプロセス中に何かが失敗した場合、インスタンスを失う可能性があります。

データの公開: メモリやディスクの転送は安全に行う必要があります。

データの操作: メモリやディスクの転送がセキュアに処理されなければ、攻撃者がマイグレーション中にユーザーデータを操作できる可能性があります。

コードの挿入: メモリやディスクの転送が安全ではない場合、マイグレーション中に攻撃者によってディスクやメモリ上の実行ファイルが操作される可能性があります。

ライブマイグレーションのリスクの軽減¶

ライブマイグレーションに関連するリスクを軽減するためには様々な手法があります。次のリストで詳しく説明します。

ライブマイグレーションの無効化

マイグレーションネットワークの分離

ライブマイグレーションの暗号化

ライブマイグレーションの無効化¶

At this time, live migration is enabled in OpenStack

by default. Live migrations can be disabled by adding the

following lines to the nova policy.json file:

{

"compute_extension:admin_actions:migrate": "!",

"compute_extension:admin_actions:migrateLive": "!",

}

マイグレーションネットワーク¶

一般的には、ライブマイグレーションで発生するトラフィックは管理セキュリティドメインに制限するべきです。セキュリティ境界と脅威 を参照してください。平文であり、稼働中のインスタンスのディスクとメモリを転送するということを踏まえると、安全性を確保するためにはライブマイグレーションのトラフィックを専用のネットワークに分離することを推奨します。専用ネットワークにトラフィックを分離することで、露出の危険性を下げることができます。

ライブマイグレーションの暗号化¶

If there is a sufficient business case for keeping live migration enabled, then libvirtd can provide encrypted tunnels for the live migrations. However, this feature is not currently exposed in either the OpenStack Dashboard or nova-client commands, and can only be accessed through manual configuration of libvirtd. The live migration process then changes to the following high-level steps:

インスタンスのデータが、ハイパーバイザーから libvirtd にコピーされます。

宛先ホストと送信先ホストの libvirtd プロセス間で暗号化トンネルが作成されます。

Destination libvirtd host copies the instances back to an underlying hypervisor.

モニタリング、アラート、レポート¶

As an OpenStack virtual machine is a server image able to be replicated across hosts, best practice in logging applies similarly between physical and virtual hosts. Operating system-level and application-level events should be logged, including access events to hosts and data, user additions and removals, changes in privilege, and others as dictated by the environment. Ideally, you can configure these logs to export to a log aggregator that collects log events, correlates them for analysis, and stores them for reference or further action. One common tool to do this is an ELK stack, or Elasticsearch, Logstash, and Kibana.

These logs should be reviewed at a regular cadence such as a live view by a network operations center (NOC), or if the environment is not large enough to necessitate a NOC, then logs should undergo a regular log review process.

Many times interesting events trigger an alert which is sent to a responder for action. Frequently this alert takes the form of an email with the messages of interest. An interesting event could be a significant failure, or known health indicator of a pending failure. Two common utilities for managing alerts are Nagios and Zabbix.

更新および¶

A hypervisor runs independent virtual machines. This hypervisor can run in an operating system or directly on the hardware (called baremetal). Updates to the hypervisor are not propagated down to the virtual machines. For example, if a deployment is using XenServer and has a set of Debian virtual machines, an update to XenServer will not update anything running on the Debian virtual machines.

Therefore, we recommend that clear ownership of virtual machines be assigned, and that those owners be responsible for the hardening, deployment, and continued functionality of the virtual machines. We also recommend that updates be deployed on a regular schedule. These patches should be tested in an environment as closely resembling production as possible to ensure both stability and resolution of the issue behind the patch.

ファイアウォールおよび他のホストベースのセキュリティ制御¶

Most common operating systems include host-based firewalls for additional security. While we recommend that virtual machines run as few applications as possible (to the point of being single-purpose instances, if possible), all applications running on a virtual machine should be profiled to determine what system resources the application needs access to, the lowest level of privilege required for it to run, and what the expected network traffic is that will be going into and coming from the virtual machine. This expected traffic should be added to the host-based firewall as allowed traffic (or whitelisted), along with any necessary logging and management communication such as SSH or RDP. All other traffic should be explicitly denied in the firewall configuration.

On Linux virtual machines, the application profile above can be used in conjunction with a tool like audit2allow to build an SELinux policy that will further protect sensitive system information on most Linux distributions. SELinux uses a combination of users, policies and security contexts to compartmentalize the resources needed for an application to run, and segmenting it from other system resources that are not needed.

OpenStack provides security groups for both hosts and the network to add defense in depth to the virtual machines in a given project. These are similar to host-based firewalls as they allow or deny incoming traffic based on port, protocol, and address, however security group rules are applied to incoming traffic only, while host-based firewall rules are able to be applied to both incoming and outgoing traffic. It is also possible for host and network security group rules to conflict and deny legitimate traffic. We recommend ensuring that security groups are configured correctly for the networking being used. See セキュリティグループ in this guide for more detail.