
Container networking

OpenStack-Ansible deploys Linux containers (LXC) and uses Linux or Open vSwitch-based bridging between the container and the host interfaces to ensure that all traffic from containers flows over multiple host interfaces. All services in this deployment model use a unique IP address.

This appendix describes how the interfaces are connected and how traffic flows.

For more information about how the OpenStack Networking service (Neutron) uses the interfaces for instance traffic, see the OpenStack Networking Guide.

For details on configuring networking for your environment, see the openstack_user_config settings reference.

Physical host interfaces

In a typical production environment, physical network interfaces are combined in bonded pairs for better redundancy and throughput. Avoid using two ports on the same multiport network card for the same bonded interface, because a network card failure affects both of the physical network interfaces used by the bond. A single (bonded) interface is also a supported configuration, but it requires the use of VLAN subinterfaces.
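The advice about not bonding two ports of the same card can be checked mechanically. The following is a minimal sketch, not part of OpenStack-Ansible: it assumes that ports on one multiport card share the same PCI domain, bus, and device and differ only in the function number, which is common but not universal. The interface names, bond names, and PCI addresses are hypothetical.

```python
def nic_card(pci_address):
    """Return the domain:bus:device part of a PCI address, dropping the function."""
    return pci_address.rsplit(".", 1)[0]


def bonds_sharing_a_card(bonds, pci_by_iface):
    """Return {bond: card} for every bond whose members all sit on one card."""
    risky = {}
    for bond, members in bonds.items():
        cards = {nic_card(pci_by_iface[member]) for member in members}
        if len(members) > 1 and len(cards) == 1:
            risky[bond] = cards.pop()
    return risky


# Hypothetical inventory: bond0 uses two ports of the same card,
# bond1 spreads its members across two different cards.
bonds = {"bond0": ["eno1", "eno2"], "bond1": ["eno3", "ens1f0"]}
pci_by_iface = {
    "eno1": "0000:3b:00.0",
    "eno2": "0000:3b:00.1",
    "eno3": "0000:3b:00.2",
    "ens1f0": "0000:5e:00.0",
}

print(bonds_sharing_a_card(bonds, pci_by_iface))
```

In this example only bond0 is reported, because both of its members resolve to the same card.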

Linux Bridges/Switches

The combination of containers and flexible deployment options requires implementation of advanced Linux networking features, such as bridges, switches, and namespaces.

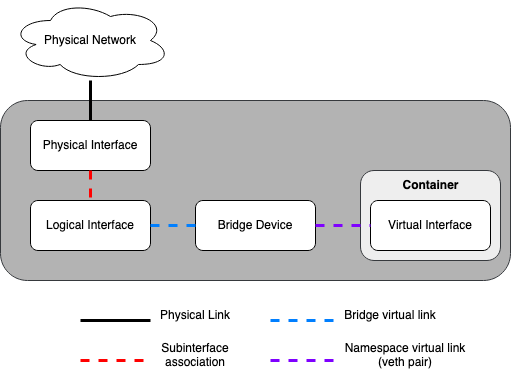

Bridges provide layer 2 connectivity (similar to physical switches) among physical, logical, and virtual network interfaces within a host. After a bridge/switch is created, the network interfaces are virtually plugged in to it.

OpenStack-Ansible uses Linux bridges for control plane connections to LXC containers, and can use Linux bridges or Open vSwitch-based bridges for data plane connections that connect virtual machine instances to the physical network infrastructure.

Network namespaces provide logically separate layer 3 environments (similar to VRFs) within a host. Namespaces use virtual interfaces to connect with other namespaces, including the host namespace. These interfaces, often called veth pairs, are virtually plugged in between namespaces, much like patch cables connecting physical devices such as switches and routers.

Each container has a namespace that connects to the host namespace with one or more veth pairs. Unless specified otherwise, the system generates random names for veth pairs.
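To make the patch-cable analogy concrete, the sketch below builds the iproute2 commands that would create a namespace, create a veth pair, move one end into the namespace, and plug the other end into a host bridge. It only prints the commands and does not run them. LXC sets up container namespaces and veth pairs itself, so this is an illustration of the underlying mechanism rather than what OpenStack-Ansible executes; the namespace, interface, and bridge names are assumptions.

```python
def veth_commands(namespace, host_if, peer_if, bridge):
    """Return the iproute2 commands that wire a namespace to a host bridge."""
    return [
        # Create a named network namespace.
        ["ip", "netns", "add", namespace],
        # Create the veth pair; both ends start in the host namespace.
        ["ip", "link", "add", host_if, "type", "veth", "peer", "name", peer_if],
        # Move one end of the pair into the new namespace.
        ["ip", "link", "set", peer_if, "netns", namespace],
        # Plug the host end into the bridge and bring it up.
        ["ip", "link", "set", host_if, "master", bridge],
        ["ip", "link", "set", host_if, "up"],
    ]


# Hypothetical names, chosen only for the example.
for cmd in veth_commands("demo-ns", "veth-host0", "veth-ct0", "br-mgmt"):
    print(" ".join(cmd))
```

Running the printed commands would require root privileges and an existing bridge with the given name.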

The following image demonstrates how the container network interfaces are connected to the host's bridges and physical network interfaces:

Network diagrams

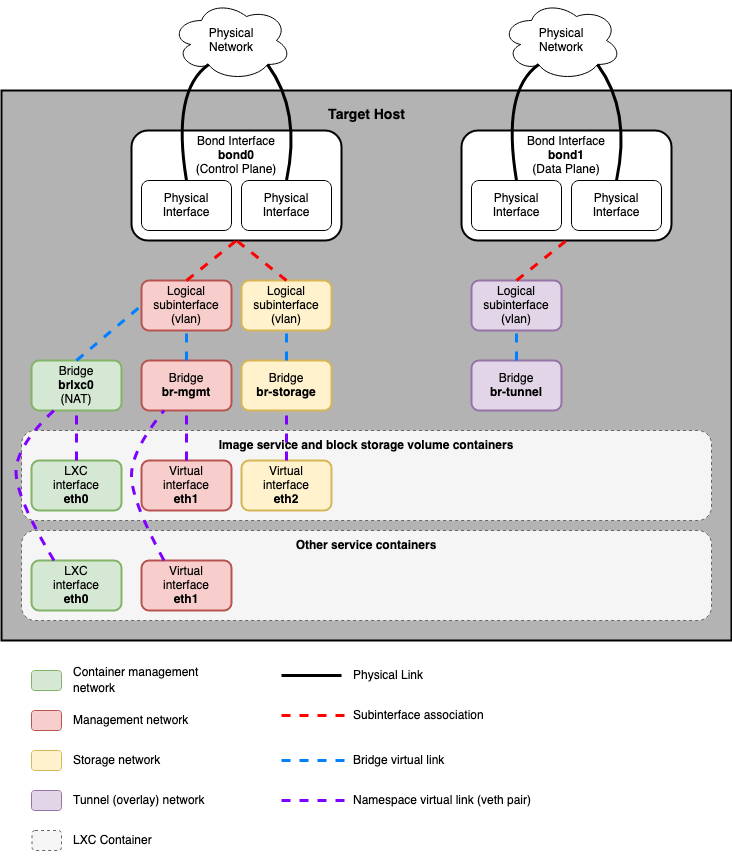

Hosts with services running in containers

The following diagram shows how all of the interfaces and bridges interconnect to provide network connectivity to the OpenStack deployment:

The bridge lxcbr0 is configured automatically and provides connectivity for the containers (via eth0) to the outside world, thanks to dnsmasq (dhcp/dns) + NAT.

Note

If you require additional network configuration for your container interfaces (such as changing the routes on eth1 for the management network), adapt your openstack_user_config.yml file. See the openstack_user_config settings reference for details.

Neutron traffic

Common reference drivers, including ML2/OVS and ML2/OVN, and their respective agents, are responsible for managing the virtual networking infrastructure on each node. OpenStack-Ansible refers to Neutron traffic as "data plane" traffic, which can consist of flat, VLAN, or overlay technologies such as VXLAN and Geneve.

Neutron agents can be deployed across a variety of hosts, but are typically limited to dedicated network hosts or infrastructure hosts (controller nodes). Neutron agents are deployed directly on the host operating system, not within an LXC container. Neutron typically requires the operator to define "provider bridge mappings", which map a provider network name to a physical interface. These provider bridge mappings provide flexibility and abstract physical interface names when creating provider networks.

Open vSwitch/OVN example:

bridge_mappings = physnet1:br-ex
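The value of bridge_mappings is a comma-separated list of physnet:bridge pairs. The sketch below is a small stand-alone parser written for illustration, not Neutron's own code; the function name parse_bridge_mappings is an assumption.

```python
def parse_bridge_mappings(value):
    """Parse 'physnet1:br-ex,physnet2:br-other' into a dict of physnet -> bridge."""
    mappings = {}
    for item in value.split(","):
        item = item.strip()
        if not item:
            continue  # tolerate empty entries such as a trailing comma
        physnet, sep, bridge = item.partition(":")
        physnet, bridge = physnet.strip(), bridge.strip()
        if not sep or not physnet or not bridge:
            raise ValueError(f"expected physnet:bridge, got {item!r}")
        mappings[physnet] = bridge
    return mappings


print(parse_bridge_mappings("physnet1:br-ex"))
```

A provider network created with the physical network name physnet1 would then be carried over the bridge br-ex on that host.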

OpenStack-Ansible provides two overrides when defining provider networks that can be used for creating the mappings and, in some cases, for connecting physical interfaces to provider bridges:

- host_bind_override
- network_interface

The host_bind_override key is used to replace an LXC-related interface name with a physical interface name when a component is deployed on bare metal hosts. It will be used to populate network_mappings for Neutron.

The network_interface override is used for Open vSwitch and OVN-based deployments, and requires the name of a physical interface that will be connected to the provider bridge (for example, br-ex) for flat and VLAN-based provider and project network traffic.
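The effect of the two overrides can be modelled with a few lines of Python. This is a simplified illustration of the behaviour described above, not the logic OpenStack-Ansible uses internally. The dictionary keys other than host_bind_override and network_interface (net_name, container_bridge, container_interface) and all values are assumptions made for the example.

```python
def interface_for(network, on_metal):
    """Pick the interface name a component binds to for this provider network."""
    # On bare metal, host_bind_override replaces the LXC-related interface name.
    if on_metal and "host_bind_override" in network:
        return network["host_bind_override"]
    return network["container_interface"]


def bridge_ports(networks):
    """Map each provider bridge to the physical interface that should join it."""
    return {
        net["container_bridge"]: net["network_interface"]
        for net in networks
        if "network_interface" in net
    }


# Hypothetical provider network definition.
vlan_net = {
    "net_name": "physnet1",
    "container_bridge": "br-ex",
    "container_interface": "eth12",
    "host_bind_override": "bond1",
    "network_interface": "bond1",
}

print(interface_for(vlan_net, on_metal=True))
print(interface_for(vlan_net, on_metal=False))
print(bridge_ports([vlan_net]))
```

In this model the bare metal case resolves to bond1, the containerized case keeps eth12, and bond1 is the interface to be attached to br-ex.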

Note

Previous versions of OpenStack-Ansible used a bridge named br-vlan for flat and VLAN-based provider and project network traffic. The br-vlan bridge is a leftover from containerized Neutron agents and is no longer useful or recommended.

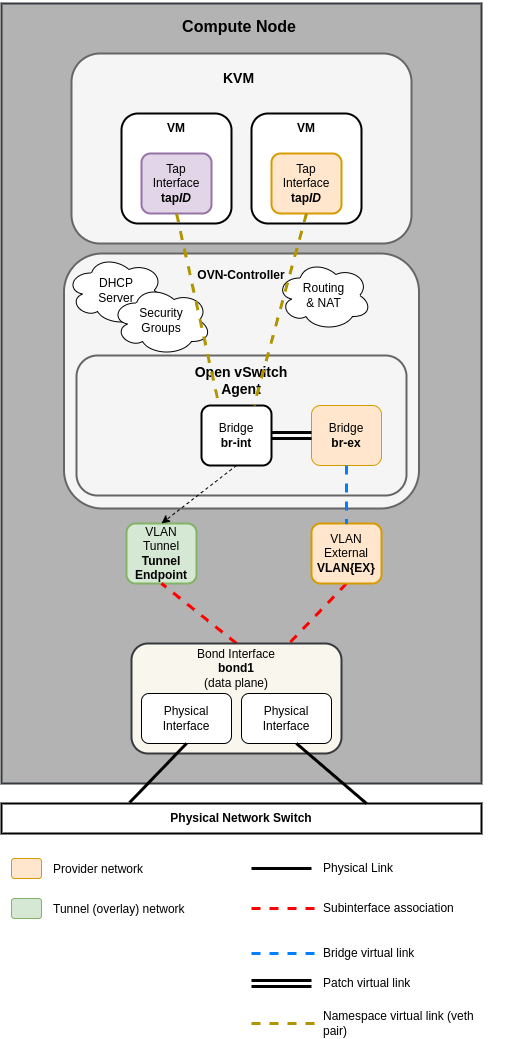

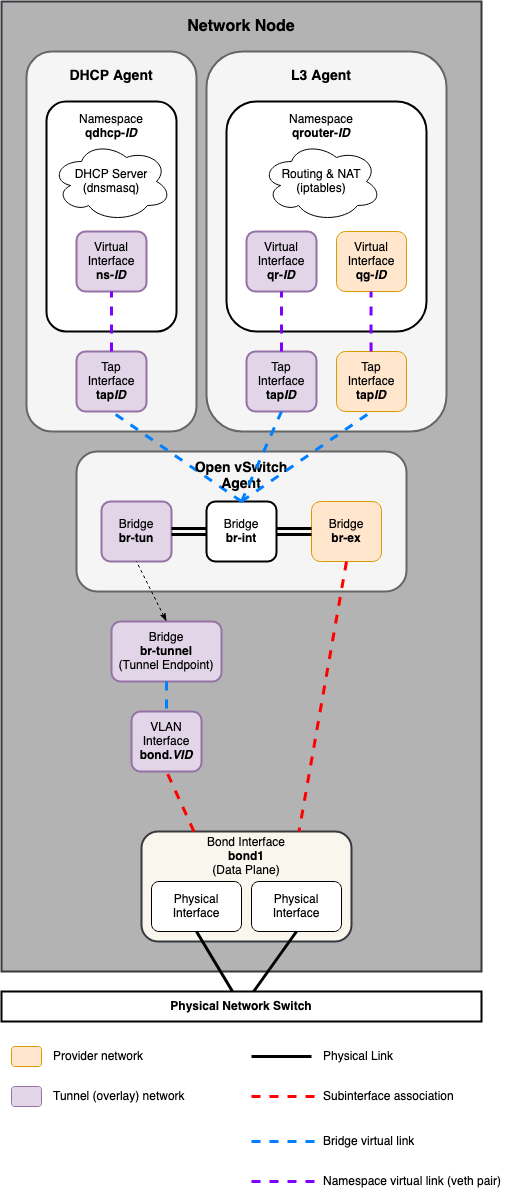

The following diagrams reflect the differences in the virtual network layout for the supported network architectures.

Open Virtual Network (OVN)

Note

The ML2/OVN mechanism driver is deployed by default as of the Zed release of OpenStack-Ansible.

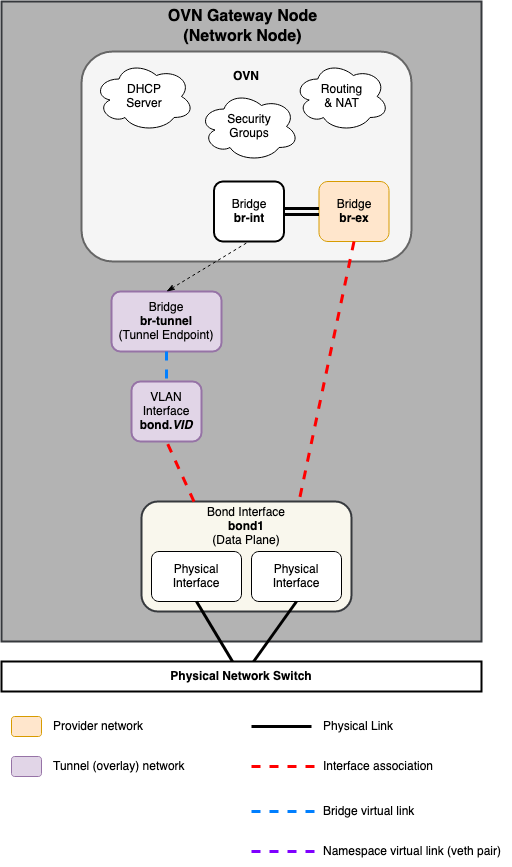

Networking Node

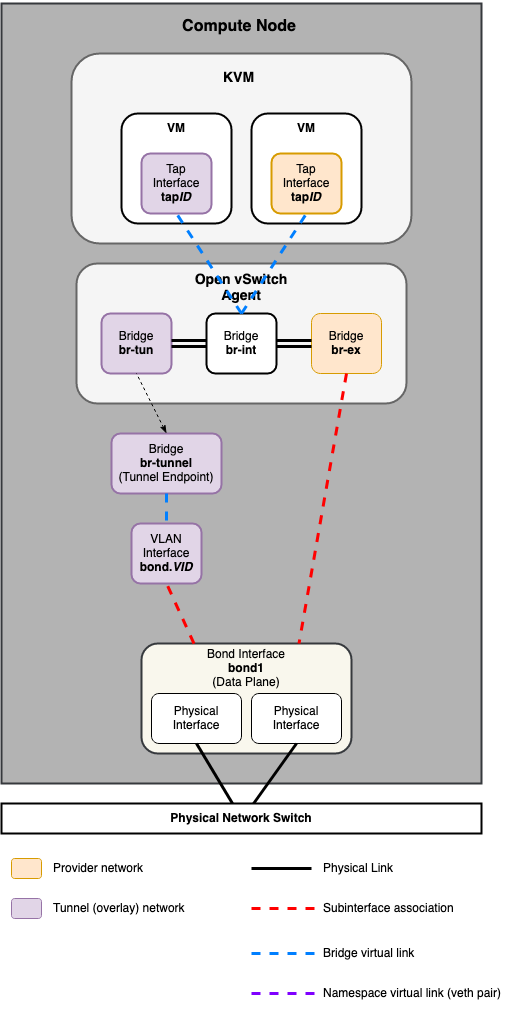

Compute Node

Open vSwitch (OVS)

Networking Node

Compute Node