
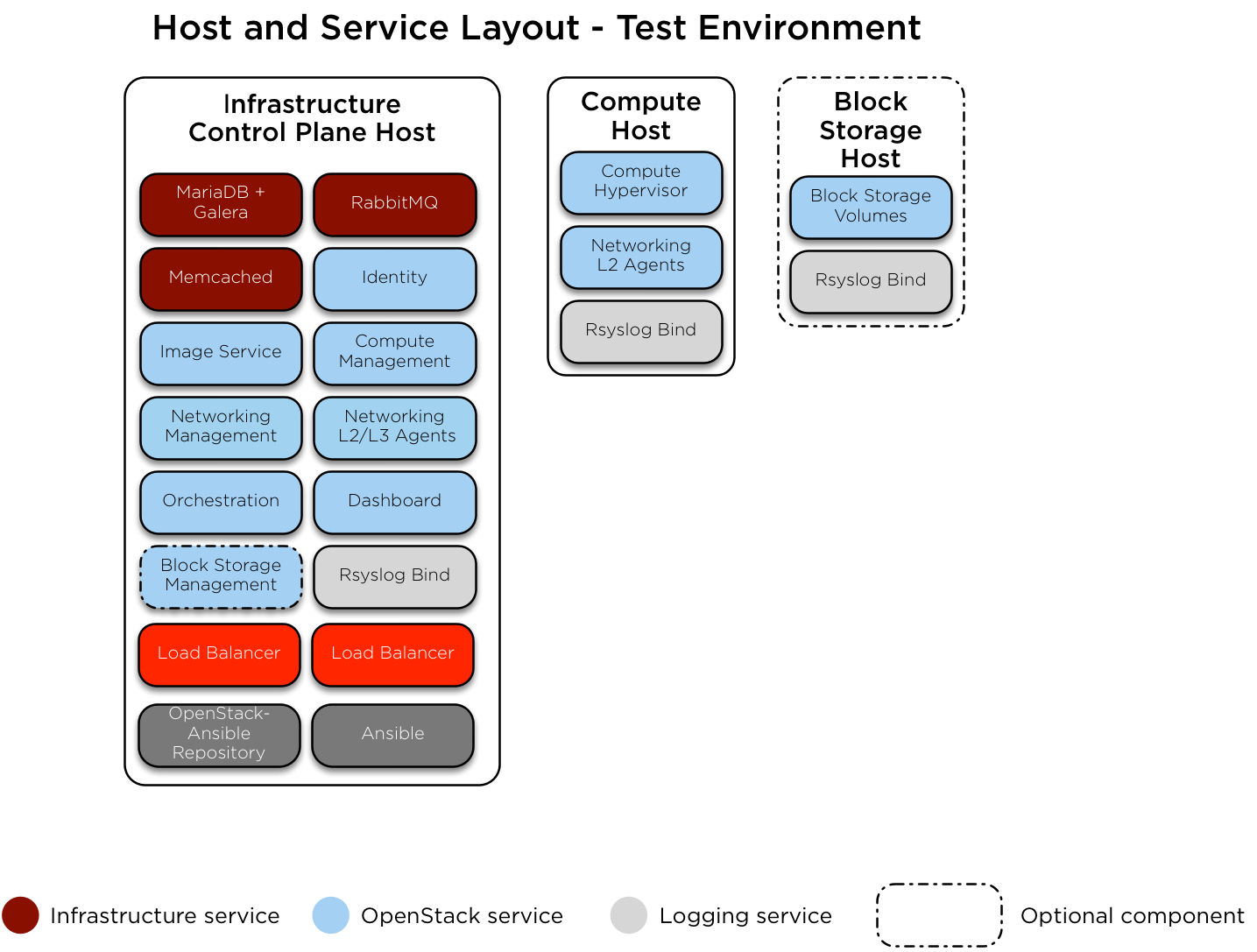

Test environment example

Here is an example test environment for a working OpenStack-Ansible (OSA) deployment with a small number of servers.

This example environment has the following characteristics:

One infrastructure (control plane) host (8 vCPU, 8 GB RAM, 60 GB HDD)

One compute host (8 vCPU, 8 GB RAM, 60 GB HDD)

One Network Interface Card (NIC) for each host

A basic compute kit environment, with the Image (glance) and Compute (nova) services set to use file-backed storage.

Internet access via the router address 172.29.236.1 on the Management Network

Network configuration

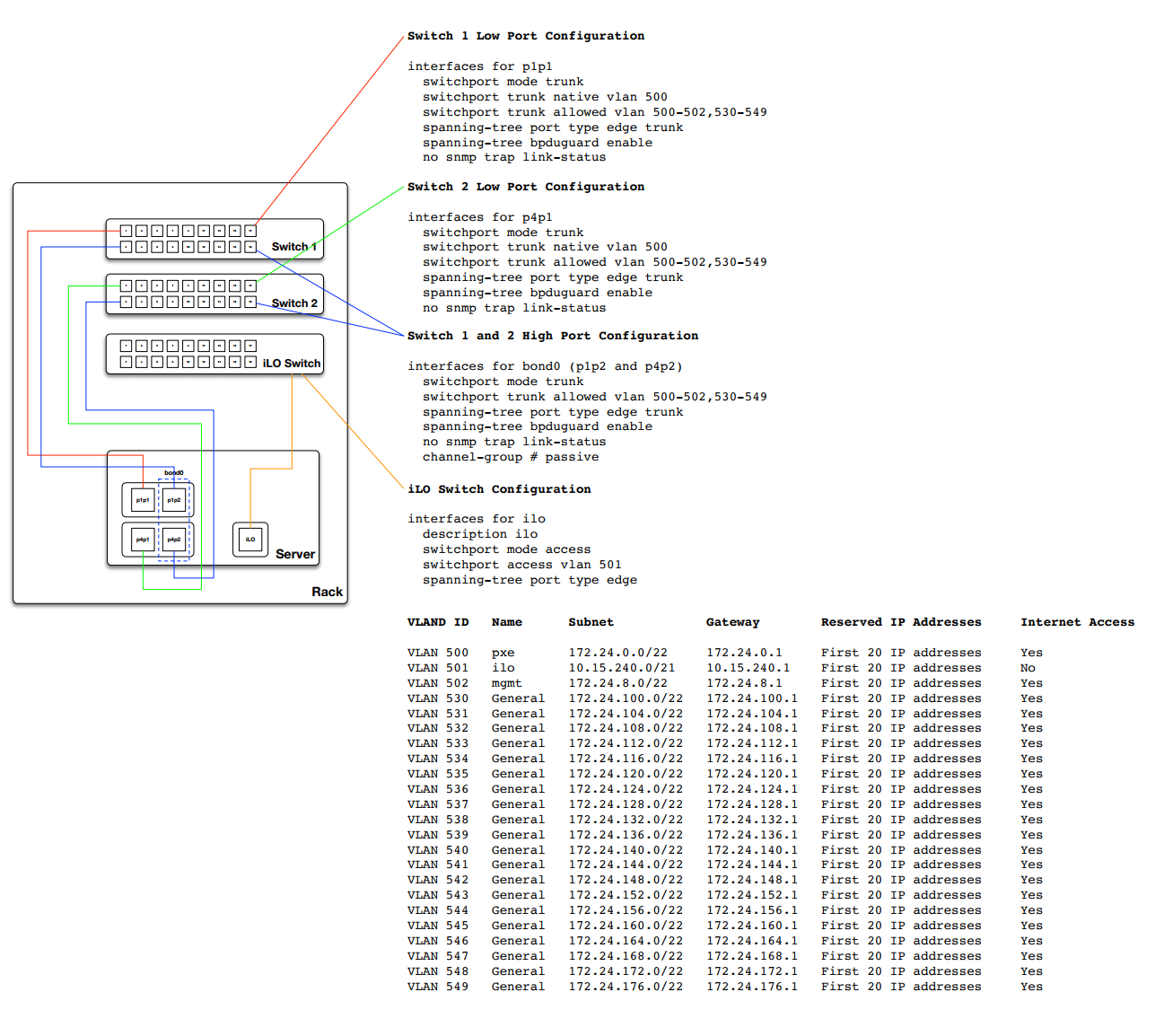

Switch port configuration

The following example provides a good reference for switch configuration and cab layout. It may be more than a basic setup requires, but it can be adjusted to suit almost any configuration. You will also need to adjust the VLANs noted in this example to match your environment.

Network CIDR/VLAN assignments

The following CIDR and VLAN assignments are used for this environment.

| Network | CIDR | VLAN |
|---|---|---|
| Management Network | 172.29.236.0/22 | 10 |
| Tunnel (VXLAN) Network | 172.29.240.0/22 | 30 |
| Storage Network | 172.29.244.0/22 | 20 |
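As a quick sanity check on these assignments, the following Python sketch uses the standard-library `ipaddress` module to confirm that the three /22 networks do not overlap and to derive the netmask used later in the host configuration. The `NETWORKS` dictionary and the `overlapping_pairs` helper are illustrative names and are not part of OpenStack-Ansible.

```python
import ipaddress

# CIDR assignments copied from this section; the dictionary name is illustrative.
NETWORKS = {
    "management": ipaddress.ip_network("172.29.236.0/22"),
    "tunnel": ipaddress.ip_network("172.29.240.0/22"),
    "storage": ipaddress.ip_network("172.29.244.0/22"),
}


def overlapping_pairs(networks):
    """Return the names of any two networks whose address ranges overlap."""
    names = sorted(networks)
    return [
        (a, b)
        for i, a in enumerate(names)
        for b in names[i + 1:]
        if networks[a].overlaps(networks[b])
    ]


for name, net in NETWORKS.items():
    # Print the netmask plus the first and last address of each network.
    print(name, net.netmask, net[0], net[-1])

# An empty list means no two networks share addresses.
print(overlapping_pairs(NETWORKS))
```

If you change a CIDR to match your own environment, rerunning the check shows whether the new ranges still stay clear of each other.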

IP assignments

The following host name and IP address assignments are used for this environment.

| Host name | Management IP | Tunnel (VXLAN) IP | Storage IP |
|---|---|---|---|
| infra1 | 172.29.236.11 | 172.29.240.11 | |
| compute1 | 172.29.236.12 | 172.29.240.12 | 172.29.244.12 |
| storage1 | 172.29.236.13 | | 172.29.244.13 |
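One way to catch a mistyped address before deployment is to check each host IP against the CIDR of its network. The sketch below is a minimal illustration using the Python standard library; the `CIDRS` and `HOSTS` structures and the `misplaced_addresses` function are hypothetical helpers written for this example, with values copied from this section.

```python
import ipaddress

# Values copied from this section; None marks a host with no address on that network.
CIDRS = {
    "management": "172.29.236.0/22",
    "tunnel": "172.29.240.0/22",
    "storage": "172.29.244.0/22",
}
HOSTS = {
    "infra1": {"management": "172.29.236.11", "tunnel": "172.29.240.11", "storage": None},
    "compute1": {"management": "172.29.236.12", "tunnel": "172.29.240.12", "storage": "172.29.244.12"},
    "storage1": {"management": "172.29.236.13", "tunnel": None, "storage": "172.29.244.13"},
}


def misplaced_addresses(hosts, cidrs):
    """List (host, network, address) entries whose address is outside its CIDR."""
    problems = []
    for host, addresses in hosts.items():
        for network, address in addresses.items():
            if address is None:
                continue  # no address assigned on this network
            if ipaddress.ip_address(address) not in ipaddress.ip_network(cidrs[network]):
                problems.append((host, network, address))
    return problems


# An empty list means every assigned address sits inside its network.
print(misplaced_addresses(HOSTS, CIDRS))
```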

Host network configuration

Each host will require the correct network bridges to be implemented. The following is the /etc/network/interfaces file for infra1.

Note

If your environment does not have eth0, but instead has p1p1 or some other interface name, ensure that all references to eth0 in all configuration files are replaced with the appropriate name. The same applies to additional network interfaces.

    # This is a single-NIC configuration to implement the required bridges
    # for OpenStack-Ansible. This illustrates the configuration of the first
    # Infrastructure host and the IP addresses assigned should be adapted
    # for implementation on the other hosts.
    #
    # After implementing this configuration, the host will need to be
    # rebooted.

    # Physical interface
    auto eth0
    iface eth0 inet manual

    # Container/Host management VLAN interface
    auto eth0.10
    iface eth0.10 inet manual
        vlan-raw-device eth0

    # OpenStack Networking VXLAN (tunnel/overlay) VLAN interface
    auto eth0.30
    iface eth0.30 inet manual
        vlan-raw-device eth0

    # Storage network VLAN interface (optional)
    auto eth0.20
    iface eth0.20 inet manual
        vlan-raw-device eth0

    # Container/Host management bridge
    auto br-mgmt
    iface br-mgmt inet static
        bridge_stp off
        bridge_waitport 0
        bridge_fd 0
        bridge_ports eth0.10
        address 172.29.236.11
        netmask 255.255.252.0
        gateway 172.29.236.1
        dns-nameservers 8.8.8.8 8.8.4.4

    # Bind the External VIP
    auto br-mgmt:0
    iface br-mgmt:0 inet static
        address 172.29.236.10
        netmask 255.255.252.0

    # OpenStack Networking VXLAN (tunnel/overlay) bridge
    #
    # The COMPUTE, NETWORK and INFRA nodes must have an IP address
    # on this bridge.
    #
    auto br-vxlan
    iface br-vxlan inet static
        bridge_stp off
        bridge_waitport 0
        bridge_fd 0
        bridge_ports eth0.30
        address 172.29.240.11
        netmask 255.255.252.0

    # OpenStack Networking VLAN bridge
    auto br-vlan
    iface br-vlan inet manual
        bridge_stp off
        bridge_waitport 0
        bridge_fd 0
        bridge_ports eth0

    # compute1 Network VLAN bridge
    #auto br-vlan
    #iface br-vlan inet manual
    #    bridge_stp off
    #    bridge_waitport 0
    #    bridge_fd 0
    #
    # For tenant vlan support, create a veth pair to be used when the neutron
    # agent is not containerized on the compute hosts. 'eth12' is the value used on
    # the host_bind_override parameter of the br-vlan network section of the
    # openstack_user_config example file. The veth peer name must match the value
    # specified on the host_bind_override parameter.
    #
    # When the neutron agent is containerized it will use the container_interface
    # value of the br-vlan network, which is also the same 'eth12' value.
    #
    # Create veth pair, do not abort if already exists
    #    pre-up ip link add br-vlan-veth type veth peer name eth12 || true
    # Set both ends UP
    #    pre-up ip link set br-vlan-veth up
    #    pre-up ip link set eth12 up
    # Delete veth pair on DOWN
    #    post-down ip link del br-vlan-veth || true
    #    bridge_ports eth0 br-vlan-veth

    # Storage bridge (optional)
    #
    # Only the COMPUTE and STORAGE nodes must have an IP address
    # on this bridge. When used by infrastructure nodes, the
    # IP addresses are assigned to containers which use this
    # bridge.
    #
    auto br-storage
    iface br-storage inet manual
        bridge_stp off
        bridge_waitport 0
        bridge_fd 0
        bridge_ports eth0.20

    # compute1 Storage bridge
    #auto br-storage
    #iface br-storage inet static
    #    bridge_stp off
    #    bridge_waitport 0
    #    bridge_fd 0
    #    bridge_ports eth0.20
    #    address 172.29.244.12
    #    netmask 255.255.252.0
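If your NIC is not named eth0, every reference in the file has to change, including VLAN sub-interfaces such as eth0.10. The following Python sketch shows one possible way to do that substitution safely; `rename_interface` is a hypothetical helper written for this example, and the sample text is only an excerpt of the configuration.

```python
import re


def rename_interface(config_text, old_name, new_name):
    """Replace whole-name occurrences of old_name, including VLAN forms such as eth0.10."""
    # The lookarounds reject matches that are part of a longer name,
    # so 'veth0' and 'eth01' are left untouched while 'eth0.10' is renamed.
    pattern = re.compile(r"(?<![\w-])" + re.escape(old_name) + r"(?![\w-])")
    # A function replacement avoids any special handling of backslashes in new_name.
    return pattern.sub(lambda _match: new_name, config_text)


sample = """auto eth0.10
iface eth0.10 inet manual
    vlan-raw-device eth0
"""
print(rename_interface(sample, "eth0", "p1p1"))
```

The substitution also rewrites commented-out lines, which is usually what you want here because the compute1 stanzas are kept as comments in the same file.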

Deployment configuration

Environment layout

The /etc/openstack_deploy/openstack_user_config.yml file defines the environment layout.

The following configuration describes the layout for this environment.

    ---
    cidr_networks:
      management: 172.29.236.0/22
      tunnel: 172.29.240.0/22
      storage: 172.29.244.0/22

    used_ips:
      - "172.29.236.1,172.29.236.50"
      - "172.29.240.1,172.29.240.50"
      - "172.29.244.1,172.29.244.50"
      - "172.29.248.1,172.29.248.50"

    global_overrides:
      # The internal and external VIP should be different IPs, however they
      # do not need to be on separate networks.
      external_lb_vip_address: 172.29.236.10
      internal_lb_vip_address: 172.29.236.11
      management_bridge: "br-mgmt"
      provider_networks:
        - network:
            container_bridge: "br-mgmt"
            container_type: "veth"
            container_interface: "eth1"
            ip_from_q: "management"
            type: "raw"
            group_binds:
              - all_containers
              - hosts
            is_management_address: true
        - network:
            container_bridge: "br-vxlan"
            container_type: "veth"
            container_interface: "eth10"
            ip_from_q: "tunnel"
            type: "vxlan"
            range: "1:1000"
            net_name: "vxlan"
            group_binds:
              - neutron_openvswitch_agent
        - network:
            container_bridge: "br-vlan"
            container_type: "veth"
            container_interface: "eth12"
            host_bind_override: "eth12"
            type: "flat"
            net_name: "physnet1"
            group_binds:
              - neutron_openvswitch_agent
        - network:
            container_bridge: "br-vlan"
            container_type: "veth"
            container_interface: "eth11"
            type: "vlan"
            range: "101:200,301:400"
            net_name: "physnet2"
            group_binds:
              - neutron_openvswitch_agent
        - network:
            container_bridge: "br-storage"
            container_type: "veth"
            container_interface: "eth2"
            ip_from_q: "storage"
            type: "raw"
            group_binds:
              - glance_api
              - cinder_api
              - cinder_volume
              - nova_compute

    ###
    ### Infrastructure
    ###

    # galera, memcache, rabbitmq, utility
    shared_infra_hosts:
      infra1:
        ip: 172.29.236.11

    # repository (apt cache, python packages, etc)
    repo_infra_hosts:
      infra1:
        ip: 172.29.236.11

    # load balancer
    load_balancer_hosts:
      infra1:
        ip: 172.29.236.11

    ###
    ### OpenStack
    ###

    # keystone
    identity_hosts:
      infra1:
        ip: 172.29.236.11

    # cinder api services
    storage_infra_hosts:
      infra1:
        ip: 172.29.236.11

    # glance
    image_hosts:
      infra1:
        ip: 172.29.236.11

    # placement
    placement_infra_hosts:
      infra1:
        ip: 172.29.236.11

    # nova api, conductor, etc services
    compute_infra_hosts:
      infra1:
        ip: 172.29.236.11

    # heat
    orchestration_hosts:
      infra1:
        ip: 172.29.236.11

    # horizon
    dashboard_hosts:
      infra1:
        ip: 172.29.236.11

    # neutron server, agents (L3, etc)
    network_hosts:
      infra1:
        ip: 172.29.236.11

    # nova hypervisors
    compute_hosts:
      compute1:
        ip: 172.29.236.12

    # cinder storage host (LVM-backed)
    storage_hosts:
      storage1:
        ip: 172.29.236.13
        container_vars:
          cinder_backends:
            limit_container_types: cinder_volume
            lvm:
              volume_group: cinder-volumes
              volume_driver: cinder.volume.drivers.lvm.LVMVolumeDriver
              volume_backend_name: LVM_iSCSI
              iscsi_ip_address: "172.29.244.13"
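The used_ips entries reserve addresses so that they are not handed out to containers, which means the host and VIP addresses in this layout should fall inside those ranges. The Python sketch below illustrates that check under the assumption that each entry is either a single address or a "first,last" pair, as in this example; `is_reserved` is a hypothetical helper and not an OpenStack-Ansible function.

```python
import ipaddress

# used_ips entries copied from the configuration in this section.
USED_IPS = [
    "172.29.236.1,172.29.236.50",
    "172.29.240.1,172.29.240.50",
    "172.29.244.1,172.29.244.50",
    "172.29.248.1,172.29.248.50",
]


def is_reserved(address, used_ips):
    """Return True if address equals a single entry or lies inside a 'first,last' range."""
    ip = ipaddress.ip_address(address)
    for entry in used_ips:
        first, _, last = entry.partition(",")
        low = ipaddress.ip_address(first.strip())
        # A bare address with no comma is treated as a one-address range.
        high = ipaddress.ip_address(last.strip()) if last else low
        if low <= ip <= high:
            return True
    return False


# VIP and host addresses used in this example environment.
for address in ("172.29.236.10", "172.29.236.11", "172.29.236.12",
                "172.29.236.13", "172.29.244.13"):
    print(address, is_reserved(address, USED_IPS))
```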

Environment customizations

The optionally deployed files in /etc/openstack_deploy/env.d allow the customization of Ansible groups. This allows the deployer to set whether the services will run in a container (the default), or on the host (on metal).

For this environment you do not need the /etc/openstack_deploy/env.d folder as the defaults set by OpenStack-Ansible are suitable.

User variables

The /etc/openstack_deploy/user_variables.yml file defines the global overrides for the default variables.

For this environment, if you want to use the same IP address for the internal and external endpoints, you will need to ensure that the internal and public OpenStack endpoints are served with the same protocol. This is done with the following content:

    ---
    # This file contains an example of the global variable overrides
    # which may need to be set for a production environment.

    ## OpenStack public endpoint protocol
    openstack_service_publicuri_proto: http