
Test environment example

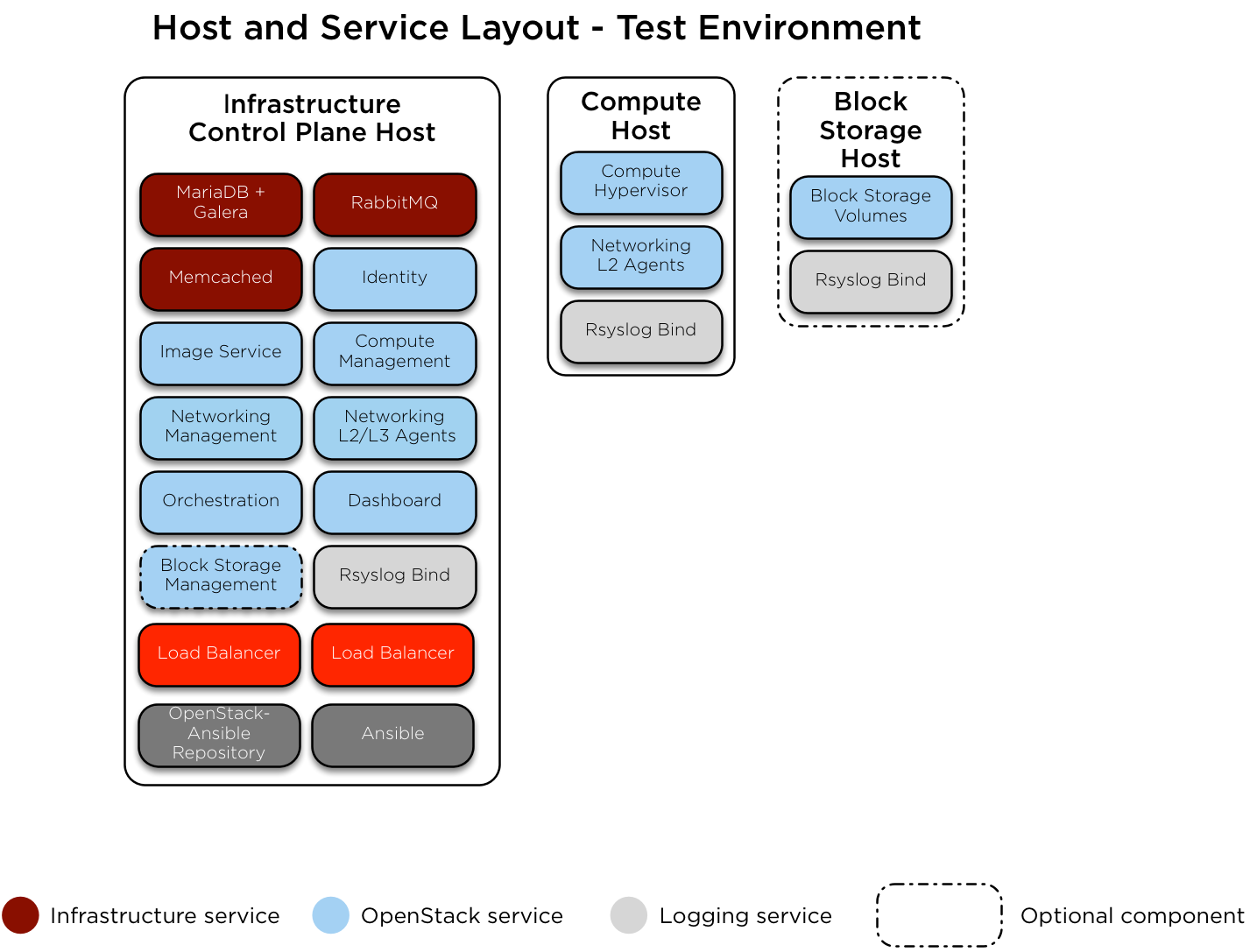

The following is an example test environment for a working OpenStack-Ansible (OSA) deployment with a small number of servers.

This example environment has the following characteristics:

- One infrastructure (control plane) host (8 vCPU, 8 GB RAM, 60 GB HDD)
- One compute host (8 vCPU, 8 GB RAM, 60 GB HDD)
- One Network Interface Card (NIC) for each host
- A basic compute kit environment, with the Image (glance) and Compute (nova) services set to use file-backed storage
- Internet access via the router address 172.29.236.1 on the Management Network

Network configuration

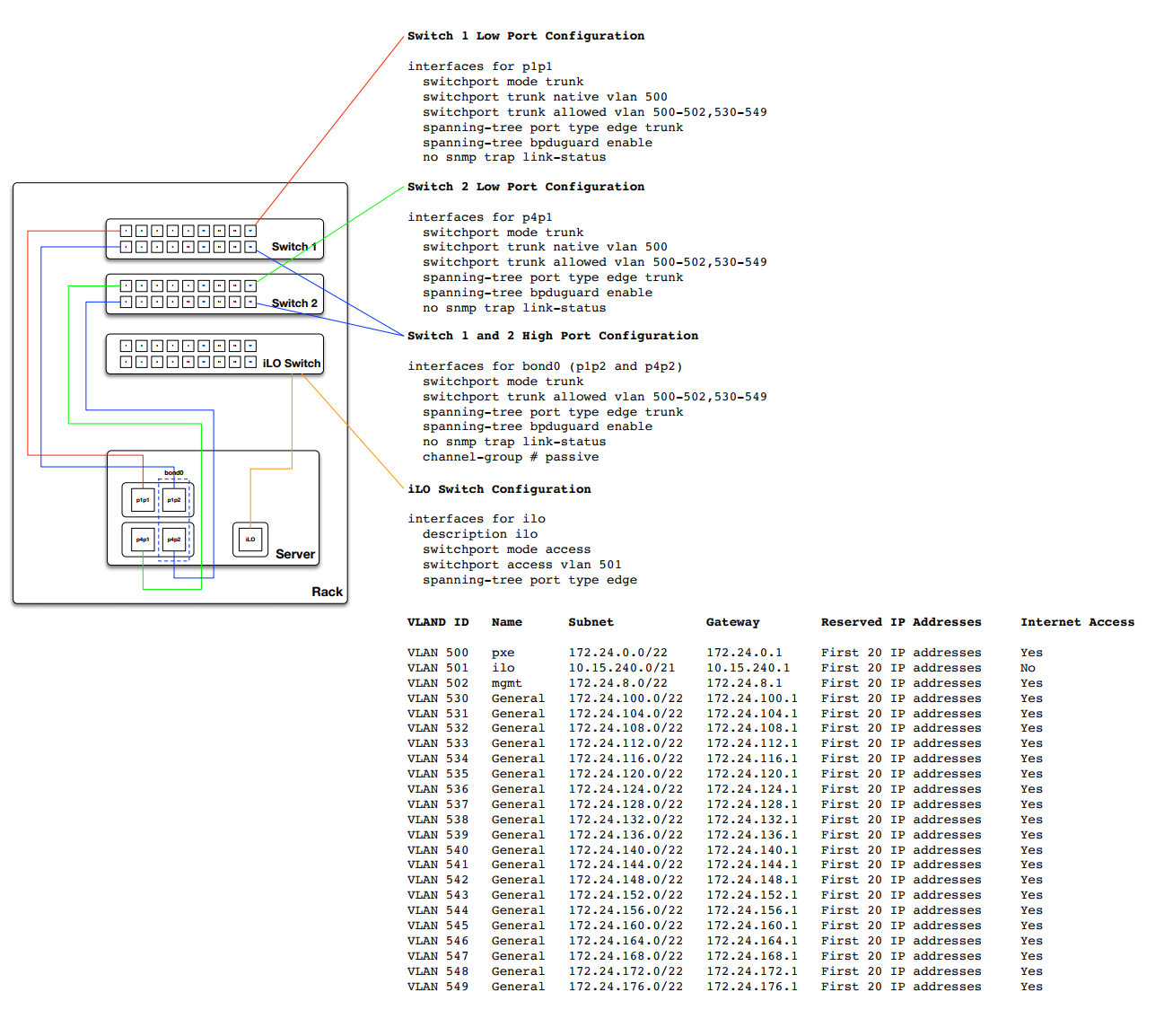

Switch port configuration

The following example provides a good reference for switch configuration and cabinet layout. It may be more than a basic setup requires, but it can be adapted to almost any configuration. You will also need to adjust the VLANs listed in this example to match your environment.

Network CIDR/VLAN assignments

The following CIDR and VLAN assignments are used for this environment.

| Network | CIDR | VLAN |
|---|---|---|
| Management Network | 172.29.236.0/22 | 10 |
| Tunnel (VXLAN) Network | 172.29.240.0/22 | 30 |
| Storage Network | 172.29.244.0/22 | 20 |
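The assignments above can be sanity-checked with a few lines of Python. The sketch below is not part of OpenStack-Ansible; the `NETWORKS` dictionary and the helper names are illustrative assumptions. It uses only the standard `ipaddress` module to derive the netmask of each /22 network and to confirm that no two networks overlap.

```python
import ipaddress
from itertools import combinations

# CIDR and VLAN values copied from the assignment table.
NETWORKS = {
    "management": ("172.29.236.0/22", 10),
    "tunnel": ("172.29.240.0/22", 30),
    "storage": ("172.29.244.0/22", 20),
}


def summarize(networks):
    """Return the VLAN, netmask and usable host count for each network."""
    summary = {}
    for name, (cidr, vlan) in networks.items():
        net = ipaddress.ip_network(cidr)
        summary[name] = {
            "vlan": vlan,
            "netmask": str(net.netmask),
            # Exclude the network and broadcast addresses.
            "usable_hosts": net.num_addresses - 2,
        }
    return summary


def overlapping_pairs(networks):
    """Return the pairs of network names whose CIDR ranges overlap."""
    parsed = {name: ipaddress.ip_network(cidr) for name, (cidr, _) in networks.items()}
    return [(a, b) for a, b in combinations(parsed, 2) if parsed[a].overlaps(parsed[b])]


if __name__ == "__main__":
    for name, info in summarize(NETWORKS).items():
        print(name, info)
    print("overlapping pairs:", overlapping_pairs(NETWORKS))
```

Each /22 network yields the netmask 255.255.252.0, which is the value used in the host network configuration of this environment.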

IP assignments

The following host names and IP addresses are used for this environment.

| Host name | Management IP | Tunnel (VXLAN) IP | Storage IP |
|---|---|---|---|
| infra1 | 172.29.236.11 | 172.29.240.11 | |
| compute1 | 172.29.236.12 | 172.29.240.12 | 172.29.244.12 |
| storage1 | 172.29.236.13 | | 172.29.244.13 |
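As a quick consistency check, each host address can be tested against the CIDR of the network it is meant to belong to. The following is a minimal sketch using Python's standard `ipaddress` module. The `HOSTS` dictionary and the function name are hypothetical, and the sketch assumes that the second address listed for storage1 is its storage IP, as its 172.29.244.0/22 range suggests.

```python
import ipaddress

NETWORKS = {
    "management": ipaddress.ip_network("172.29.236.0/22"),
    "tunnel": ipaddress.ip_network("172.29.240.0/22"),
    "storage": ipaddress.ip_network("172.29.244.0/22"),
}

# None marks a host that has no address on that network.
HOSTS = {
    "infra1": {"management": "172.29.236.11", "tunnel": "172.29.240.11", "storage": None},
    "compute1": {"management": "172.29.236.12", "tunnel": "172.29.240.12", "storage": "172.29.244.12"},
    "storage1": {"management": "172.29.236.13", "tunnel": None, "storage": "172.29.244.13"},
}


def misplaced_addresses(hosts, networks):
    """Return (host, network, ip) tuples whose IP lies outside the network CIDR."""
    problems = []
    for host, addresses in hosts.items():
        for net_name, ip in addresses.items():
            if ip is None:
                continue
            if ipaddress.ip_address(ip) not in networks[net_name]:
                problems.append((host, net_name, ip))
    return problems


if __name__ == "__main__":
    print("misplaced addresses:", misplaced_addresses(HOSTS, NETWORKS))
```

An empty list means every assigned address falls inside its intended network.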

Host network configuration

Each host requires the correct network bridges to be implemented. The following is the /etc/network/interfaces file for infra1.

Note: If your environment does not have eth0, but instead has p1p1 or some other interface name, ensure that all references to eth0 in all configuration files are replaced with the appropriate name. The same applies to additional network interfaces.

    # This is a single-NIC configuration to implement the required bridges
    # for OpenStack-Ansible. This illustrates the configuration of the first
    # Infrastructure host and the IP addresses assigned should be adapted
    # for implementation on the other hosts.
    #
    # After implementing this configuration, the host will need to be
    # rebooted.

    # Physical interface
    auto eth0
    iface eth0 inet manual

    # Container/Host management VLAN interface
    auto eth0.10
    iface eth0.10 inet manual
        vlan-raw-device eth0

    # OpenStack Networking VXLAN (tunnel/overlay) VLAN interface
    auto eth0.30
    iface eth0.30 inet manual
        vlan-raw-device eth0

    # Storage network VLAN interface (optional)
    auto eth0.20
    iface eth0.20 inet manual
        vlan-raw-device eth0

    # Container/Host management bridge
    auto br-mgmt
    iface br-mgmt inet static
        bridge_stp off
        bridge_waitport 0
        bridge_fd 0
        bridge_ports eth0.10
        address 172.29.236.11
        netmask 255.255.252.0
        gateway 172.29.236.1
        dns-nameservers 8.8.8.8 8.8.4.4

    # Bind the External VIP
    auto br-mgmt:0
    iface br-mgmt:0 inet static
        address 172.29.236.10
        netmask 255.255.252.0

    # OpenStack Networking VXLAN (tunnel/overlay) bridge
    #
    # The COMPUTE, NETWORK and INFRA nodes must have an IP address
    # on this bridge.
    #
    auto br-vxlan
    iface br-vxlan inet static
        bridge_stp off
        bridge_waitport 0
        bridge_fd 0
        bridge_ports eth0.30
        address 172.29.240.11
        netmask 255.255.252.0

    # OpenStack Networking VLAN bridge
    auto br-vlan
    iface br-vlan inet manual
        bridge_stp off
        bridge_waitport 0
        bridge_fd 0
        bridge_ports eth0

    # compute1 Network VLAN bridge
    #auto br-vlan
    #iface br-vlan inet manual
    #    bridge_stp off
    #    bridge_waitport 0
    #    bridge_fd 0
    #
    # For tenant vlan support, create a veth pair to be used when the neutron
    # agent is not containerized on the compute hosts. 'eth12' is the value used on
    # the host_bind_override parameter of the br-vlan network section of the
    # openstack_user_config example file. The veth peer name must match the value
    # specified on the host_bind_override parameter.
    #
    # When the neutron agent is containerized it will use the container_interface
    # value of the br-vlan network, which is also the same 'eth12' value.
    #
    # Create veth pair, do not abort if already exists
    #    pre-up ip link add br-vlan-veth type veth peer name eth12 || true
    # Set both ends UP
    #    pre-up ip link set br-vlan-veth up
    #    pre-up ip link set eth12 up
    # Delete veth pair on DOWN
    #    post-down ip link del br-vlan-veth || true
    #    bridge_ports eth0 br-vlan-veth

    # Storage bridge (optional)
    #
    # Only the COMPUTE and STORAGE nodes must have an IP address
    # on this bridge. When used by infrastructure nodes, the
    # IP addresses are assigned to containers which use this
    # bridge.
    #
    auto br-storage
    iface br-storage inet manual
        bridge_stp off
        bridge_waitport 0
        bridge_fd 0
        bridge_ports eth0.20

    # compute1 Storage bridge
    #auto br-storage
    #iface br-storage inet static
    #    bridge_stp off
    #    bridge_waitport 0
    #    bridge_fd 0
    #    bridge_ports eth0.20
    #    address 172.29.244.12
    #    netmask 255.255.252.0
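If the physical interface is not named eth0, every reference to it in this file has to be replaced, including the VLAN sub-interfaces such as eth0.10. The sketch below shows one way this could be scripted in Python. The function name `rename_interface` and the sample text are hypothetical, and p1p1 is used only because it is the alternative name mentioned in the note.

```python
import re


def rename_interface(config_text, old="eth0", new="p1p1"):
    """Replace whole-name occurrences of `old`, including VLAN forms like eth0.10.

    Names that merely contain `old` (for example eth01) are left untouched.
    """
    pattern = re.compile(r"(?<![\w.-])" + re.escape(old) + r"(?![\w-])")
    # A function replacement avoids backslash escapes in `new` being interpreted.
    return pattern.sub(lambda _match: new, config_text)


SAMPLE = """\
auto eth0.10
iface eth0.10 inet manual
    vlan-raw-device eth0
#    pre-up ip link add br-vlan-veth type veth peer name eth12 || true
"""

if __name__ == "__main__":
    print(rename_interface(SAMPLE))
```

The veth peer name eth12 is deliberately left unchanged, because it must keep matching the host_bind_override value. Writing the result to a copy of the file and reviewing it before replacing /etc/network/interfaces is the safer approach.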

Deployment configuration

Environment layout

The /etc/openstack_deploy/openstack_user_config.yml file defines the environment layout.

The following configuration describes the layout for this environment.

    ---
    cidr_networks:
      container: 172.29.236.0/22
      tunnel: 172.29.240.0/22
      storage: 172.29.244.0/22

    used_ips:
      - "172.29.236.1,172.29.236.50"
      - "172.29.240.1,172.29.240.50"
      - "172.29.244.1,172.29.244.50"
      - "172.29.248.1,172.29.248.50"

    global_overrides:
      # The internal and external VIP should be different IPs, however they
      # do not need to be on separate networks.
      external_lb_vip_address: 172.29.236.10
      internal_lb_vip_address: 172.29.236.11
      management_bridge: "br-mgmt"
      provider_networks:
        - network:
            container_bridge: "br-mgmt"
            container_type: "veth"
            container_interface: "eth1"
            ip_from_q: "container"
            type: "raw"
            group_binds:
              - all_containers
              - hosts
            is_container_address: true
        - network:
            container_bridge: "br-vxlan"
            container_type: "veth"
            container_interface: "eth10"
            ip_from_q: "tunnel"
            type: "vxlan"
            range: "1:1000"
            net_name: "vxlan"
            group_binds:
              - neutron_linuxbridge_agent
        - network:
            container_bridge: "br-vlan"
            container_type: "veth"
            container_interface: "eth12"
            host_bind_override: "eth12"
            type: "flat"
            net_name: "flat"
            group_binds:
              - neutron_linuxbridge_agent
        - network:
            container_bridge: "br-vlan"
            container_type: "veth"
            container_interface: "eth11"
            type: "vlan"
            range: "101:200,301:400"
            net_name: "vlan"
            group_binds:
              - neutron_linuxbridge_agent
        - network:
            container_bridge: "br-storage"
            container_type: "veth"
            container_interface: "eth2"
            ip_from_q: "storage"
            type: "raw"
            group_binds:
              - glance_api
              - cinder_api
              - cinder_volume
              - nova_compute

    ###
    ### Infrastructure
    ###

    # galera, memcache, rabbitmq, utility
    shared-infra_hosts:
      infra1:
        ip: 172.29.236.11

    # repository (apt cache, python packages, etc)
    repo-infra_hosts:
      infra1:
        ip: 172.29.236.11

    # load balancer
    haproxy_hosts:
      infra1:
        ip: 172.29.236.11

    ###
    ### OpenStack
    ###

    # keystone
    identity_hosts:
      infra1:
        ip: 172.29.236.11

    # cinder api services
    storage-infra_hosts:
      infra1:
        ip: 172.29.236.11

    # glance
    image_hosts:
      infra1:
        ip: 172.29.236.11

    # placement
    placement-infra_hosts:
      infra1:
        ip: 172.29.236.11

    # nova api, conductor, etc services
    compute-infra_hosts:
      infra1:
        ip: 172.29.236.11

    # heat
    orchestration_hosts:
      infra1:
        ip: 172.29.236.11

    # horizon
    dashboard_hosts:
      infra1:
        ip: 172.29.236.11

    # neutron server, agents (L3, etc)
    network_hosts:
      infra1:
        ip: 172.29.236.11

    # nova hypervisors
    compute_hosts:
      compute1:
        ip: 172.29.236.12

    # cinder storage host (LVM-backed)
    storage_hosts:
      storage1:
        ip: 172.29.236.13
        container_vars:
          cinder_backends:
            limit_container_types: cinder_volume
            lvm:
              volume_group: cinder-volumes
              volume_driver: cinder.volume.drivers.lvm.LVMVolumeDriver
              volume_backend_name: LVM_iSCSI
              iscsi_ip_address: "172.29.244.13"
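The used_ips entries reserve address ranges so that the inventory does not hand them out to containers. Addresses that are configured statically, such as the load balancer VIPs and the host IPs, should therefore fall inside one of those ranges. The following Python sketch checks this for the values in the configuration above. The helper names and the `STATIC_ADDRESSES` dictionary are illustrative assumptions, and the parser assumes each entry is either a "start,end" pair or a single address.

```python
import ipaddress

# Ranges copied from the used_ips section.
USED_IPS = [
    "172.29.236.1,172.29.236.50",
    "172.29.240.1,172.29.240.50",
    "172.29.244.1,172.29.244.50",
    "172.29.248.1,172.29.248.50",
]

# Statically configured addresses taken from the same file.
STATIC_ADDRESSES = {
    "external_lb_vip_address": "172.29.236.10",
    "internal_lb_vip_address": "172.29.236.11",
    "infra1": "172.29.236.11",
    "compute1": "172.29.236.12",
    "storage1": "172.29.236.13",
    "iscsi_ip_address": "172.29.244.13",
}


def parse_range(entry):
    """Turn 'start,end' (or a single address) into a (first, last) pair."""
    start, _, end = entry.partition(",")
    first = ipaddress.ip_address(start.strip())
    last = ipaddress.ip_address(end.strip()) if end else first
    return first, last


def unreserved(addresses, used_ips):
    """Return the names whose address is not covered by any reserved range."""
    ranges = [parse_range(entry) for entry in used_ips]
    return sorted(
        name
        for name, ip in addresses.items()
        if not any(first <= ipaddress.ip_address(ip) <= last for first, last in ranges)
    )


if __name__ == "__main__":
    print("unreserved static addresses:", unreserved(STATIC_ADDRESSES, USED_IPS))
```

An empty result indicates that every static address listed here is protected by a used_ips range.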

Environment customizations

The optionally deployed files in /etc/openstack_deploy/env.d allow the customization of Ansible groups. This lets the deployer set whether services run in a container (the default) or on the host (on metal).

For this environment you do not need the /etc/openstack_deploy/env.d folder, because the defaults set by OpenStack-Ansible are suitable.

User variables

The /etc/openstack_deploy/user_variables.yml file defines the global overrides for the default variables.

For this environment, if you want to use the same IP address for the internal and external endpoints, you must ensure that the internal and public OpenStack endpoints are served with the same protocol. This is done with the following content:

    ---
    # This file contains an example of the global variable overrides
    # which may need to be set for a production environment.

    ## OpenStack public endpoint protocol
    openstack_service_publicuri_proto: http