
Network architecture

OpenStack-Ansible supports a number of different network architectures. It can be deployed using a single network interface for non-production workloads, or using multiple network interfaces or bonded interfaces for production workloads.

The OpenStack-Ansible reference architecture segments traffic using VLANs across multiple network interfaces or bonds. Common networks used in an OpenStack-Ansible deployment are listed in the following table:

| Network | CIDR | VLAN |
|---|---|---|
| Management Network | 172.29.236.0/22 | 10 |
| Overlay Network | 172.29.240.0/22 | 30 |
| Storage Network | 172.29.244.0/22 | 20 |

The Management Network, also referred to as the container network, provides management of and communication between the infrastructure and OpenStack services running in containers or on metal. The management network uses a dedicated VLAN typically connected to the br-mgmt bridge, and may also be used as the primary interface for interacting with the server via SSH.

The Overlay Network, also referred to as the tunnel network, provides connectivity between hosts for the purpose of tunnelling encapsulated traffic using VXLAN, Geneve, or other protocols. The overlay network uses a dedicated VLAN typically connected to the br-vxlan bridge.
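
Because tunnelled traffic is wrapped in extra headers, the MTU available to instances on an overlay network is smaller than the MTU of the underlying VLAN. The following is a minimal sketch of that arithmetic using the standard fixed header sizes; the function name `tenant_mtu` and the `geneve_options` parameter are illustrative and not part of OpenStack-Ansible, and the 8 bytes of Geneve options in the last call is only an example value.

```python
# Fixed header sizes in bytes.
OUTER_IPV4 = 20
OUTER_IPV6 = 40
UDP = 8
VXLAN_HEADER = 8
GENEVE_BASE_HEADER = 8   # Geneve may add variable-length options on top
INNER_ETHERNET = 14


def tenant_mtu(underlay_mtu, protocol="vxlan", ipv6=False, geneve_options=0):
    """Return the MTU left for instance traffic after tunnel encapsulation."""
    outer_ip = OUTER_IPV6 if ipv6 else OUTER_IPV4
    if protocol == "vxlan":
        tunnel = VXLAN_HEADER
    elif protocol == "geneve":
        tunnel = GENEVE_BASE_HEADER + geneve_options
    else:
        raise ValueError(f"unsupported protocol: {protocol}")
    return underlay_mtu - (outer_ip + UDP + tunnel + INNER_ETHERNET)


print(tenant_mtu(1500))                               # VXLAN over IPv4
print(tenant_mtu(9000))                               # VXLAN over a jumbo-frame underlay
print(tenant_mtu(1500, "geneve", geneve_options=8))   # Geneve with 8 bytes of options
```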

The Storage Network provides segregated access to Block Storage from OpenStack services such as Cinder and Glance. The storage network uses a dedicated VLAN typically connected to the br-storage bridge.

Note

The CIDRs and VLANs listed for each network are examples and may be different in your environment.
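
When adapting the example values, it can help to confirm the netmask each CIDR implies and that the ranges do not overlap. The following is a minimal sketch using Python's standard `ipaddress` module; it is not part of OpenStack-Ansible, and the names `example_networks`, `describe`, and `overlapping_pairs` are hypothetical.

```python
import ipaddress
from itertools import combinations

# Example CIDRs from the table above; replace with your own values.
example_networks = {
    "management": "172.29.236.0/22",
    "overlay": "172.29.240.0/22",
    "storage": "172.29.244.0/22",
}


def describe(cidr):
    """Return (netmask, usable host count) for an IPv4 CIDR string."""
    net = ipaddress.ip_network(cidr)
    # Subtract the network and broadcast addresses.
    return str(net.netmask), net.num_addresses - 2


def overlapping_pairs(networks):
    """Return name pairs of networks whose address ranges overlap."""
    parsed = {name: ipaddress.ip_network(cidr) for name, cidr in networks.items()}
    return [
        (a, b)
        for a, b in combinations(sorted(parsed), 2)
        if parsed[a].overlaps(parsed[b])
    ]


for name, cidr in example_networks.items():
    netmask, hosts = describe(cidr)
    print(f"{name}: {cidr} netmask={netmask} usable_hosts={hosts}")

print("overlapping:", overlapping_pairs(example_networks))
```

The `/22` prefix corresponds to the `255.255.252.0` netmask used in the interface examples later in this document.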

Additional VLANs may be required for the following purposes:

- External provider networks for floating IPs and instances
- Self-service project networks for instances
- Other OpenStack services

Network interfaces

Configuring network interfaces

OpenStack-Ansible does not mandate any specific method of configuring network interfaces on the host. You may choose any tool, such as ifupdown, netplan, systemd-networkd, NetworkManager, or another operating-system-specific tool. The only requirement is that a set of functioning network bridges and interfaces is created that matches those expected by OpenStack-Ansible, plus any that you choose to specify for neutron physical interfaces.

A selection of example network configuration files is provided in the etc/network and etc/netplan directories for Ubuntu systems, and in etc/NetworkManager for RHEL-based (or other) systems. These files will usually need adjusting for the specific requirements of each deployment.

If you want to delegate management of network bridges and interfaces to OpenStack-Ansible, you can define the variables openstack_hosts_systemd_networkd_devices and openstack_hosts_systemd_networkd_networks in group_vars/lxc_hosts, for example:

    openstack_hosts_systemd_networkd_devices:
      - NetDev:
          Name: vlan-mgmt
          Kind: vlan
        VLAN:
          Id: 10
      - NetDev:
          Name: "{{ management_bridge }}"
          Kind: bridge
        Bridge:
          ForwardDelaySec: 0
          HelloTimeSec: 2
          MaxAgeSec: 12
          STP: off

    openstack_hosts_systemd_networkd_networks:
      - interface: "vlan-mgmt"
        bridge: "{{ management_bridge }}"
      - interface: "{{ management_bridge }}"
        address: "{{ management_address }}"
        netmask: "255.255.252.0"
        gateway: "172.29.236.1"
      - interface: "eth0"
        vlan:
          - "vlan-mgmt"
        # NOTE: `05` is prefixed to filename to have precedence over netplan
        filename: 05-lxc-net-eth0
        address: "{{ ansible_facts['eth0']['ipv4']['address'] }}"
        netmask: "{{ ansible_facts['eth0']['ipv4']['netmask'] }}"

If you need to run pre/post hooks for interfaces, you will need to configure a systemd service for that. This can be done with the openstack_hosts_systemd_services variable, for example:

    openstack_hosts_systemd_services:
      - service_name: "{{ management_bridge }}-hook"
        state: started
        enabled: yes
        service_type: oneshot
        execstarts:
          - /bin/bash -c "/bin/echo 'management bridge is available'"
        config_overrides:
          Unit:
            Wants: network-online.target
            # Jinja2 expressions cannot be nested, so only the bridge name is templated.
            # systemd escapes "-" in device unit names as "\x2d"; adjust the value
            # if the bridge name contains a hyphen.
            After: "sys-subsystem-net-devices-{{ management_bridge }}.device"
            BindsTo: "sys-subsystem-net-devices-{{ management_bridge }}.device"
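
The After and BindsTo values above refer to a systemd device unit, whose name is derived from the sysfs path of the interface using systemd's path escaping rules. The following is a minimal sketch of those rules as described in systemd.unit(5), useful for working out the unit name for an interface such as br-mgmt. The helper names `systemd_escape_path` and `device_unit_for` are hypothetical, and the sketch does not handle every corner case (for example, repeated slashes), so the `systemd-escape --path` command remains the authoritative tool.

```python
def systemd_escape_path(path):
    """Approximate `systemd-escape --path` for a simple absolute path."""
    trimmed = path.strip("/")
    if not trimmed:
        return "-"  # the root directory is escaped as a single dash
    out = []
    for index, byte in enumerate(trimmed.encode("utf-8")):
        ch = chr(byte)
        if ch == "/":
            out.append("-")
        elif (ch.isascii() and ch.isalnum()) or ch in ":_" or (ch == "." and index > 0):
            out.append(ch)
        else:
            # Everything else, including "-", becomes a C-style \xXX escape.
            out.append(f"\\x{byte:02x}")
    return "".join(out)


def device_unit_for(interface):
    """Return the .device unit name for a network interface."""
    return systemd_escape_path(f"/sys/subsystem/net/devices/{interface}") + ".device"


print(device_unit_for("br-mgmt"))
print(device_unit_for("eth0"))
```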

Setting an MTU on a network interface

Larger MTUs can be useful on certain networks, especially storage networks. Add a container_mtu attribute within the provider_networks dictionary to set a custom MTU on the container network interfaces that attach to a particular network:

    provider_networks:
      - network:
          group_binds:
            - glance_api
            - cinder_api
            - cinder_volume
            - nova_compute
          type: "raw"
          container_bridge: "br-storage"
          container_interface: "eth2"
          container_type: "veth"
          container_mtu: "9000"
          ip_from_q: "storage"
          static_routes:
            - cidr: 10.176.0.0/12
              gateway: 172.29.248.1

The example above enables jumbo frames by setting the MTU on the storage network to 9000.

Note

It's important to ensure that the MTU is consistently set across the entire network path. This includes not only the container interfaces but also the underlying bridge, physical NICs, and any connected network equipment like switches, routers, and storage devices. Inconsistent MTU settings can lead to fragmentation or dropped packets, which can severely impact performance.
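
The point about consistency can be illustrated with a small check: the usable MTU of a path is the smallest MTU of any hop on it. The following is a minimal sketch with hypothetical values; the hop names and the `storage_path` dictionary are invented for illustration and would need to be replaced with MTUs gathered from your own hosts and network equipment.

```python
def effective_path_mtu(hop_mtus):
    """Return the largest MTU that every hop on the path can carry."""
    if not hop_mtus:
        raise ValueError("at least one hop is required")
    return min(hop_mtus.values())


def undersized_hops(hop_mtus, wanted_mtu):
    """Return the sorted names of hops whose MTU is below the wanted MTU."""
    return sorted(name for name, mtu in hop_mtus.items() if mtu < wanted_mtu)


# Hypothetical storage path in which one switch port was left at 1500.
storage_path = {
    "container eth2": 9000,
    "br-storage": 9000,
    "bond0.20": 9000,
    "bond0": 9000,
    "switch port": 1500,
}

print(effective_path_mtu(storage_path))
print(undersized_hops(storage_path, 9000))
```

In this example a single undersized hop limits the whole path, even though every host-side interface is set to 9000.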

Single interface or bond

OpenStack-Ansible supports the use of a single interface or a set of bonded interfaces that carry traffic for OpenStack services as well as instances.

Open Virtual Network (OVN)

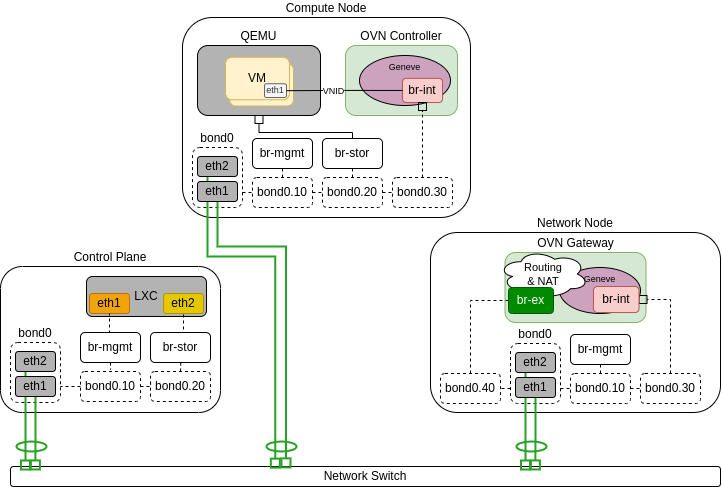

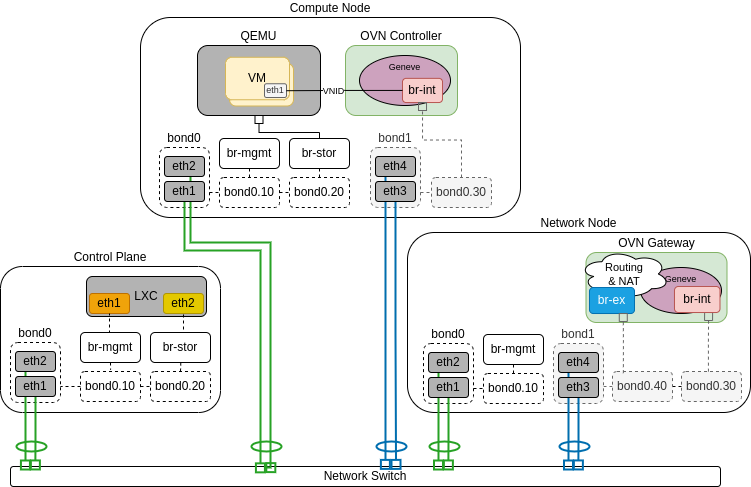

The following diagrams demonstrate hosts using a single bond with OVN.

In the scenario below only Network node is connected to external network and computes do not have external connectivity, so routers are needed for external connectivity:

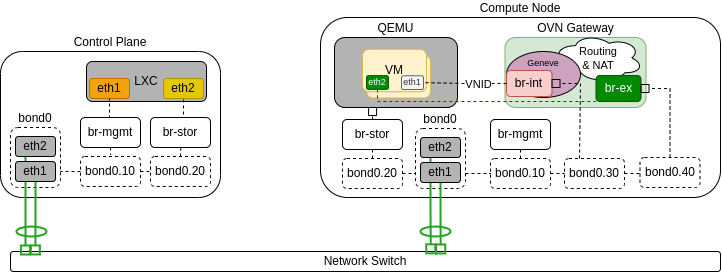

The following diagram demonstrates a compute node serving as an OVN gatway. It is connected to the public network, which enables to connect VMs to public networks not only through routers, but also directly:

Open vSwitch

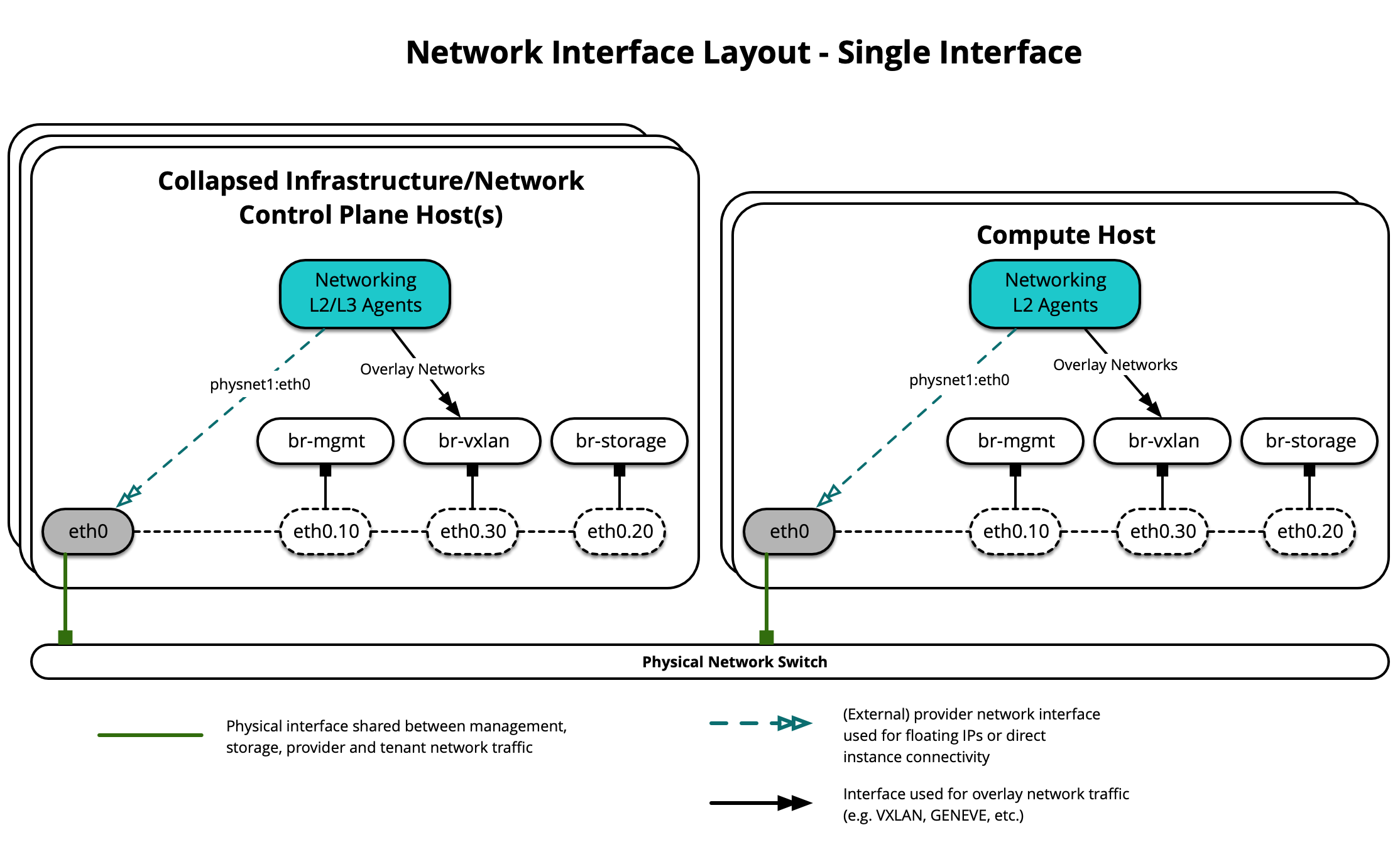

The following diagram demonstrates hosts using a single interface in the OVS scenario:

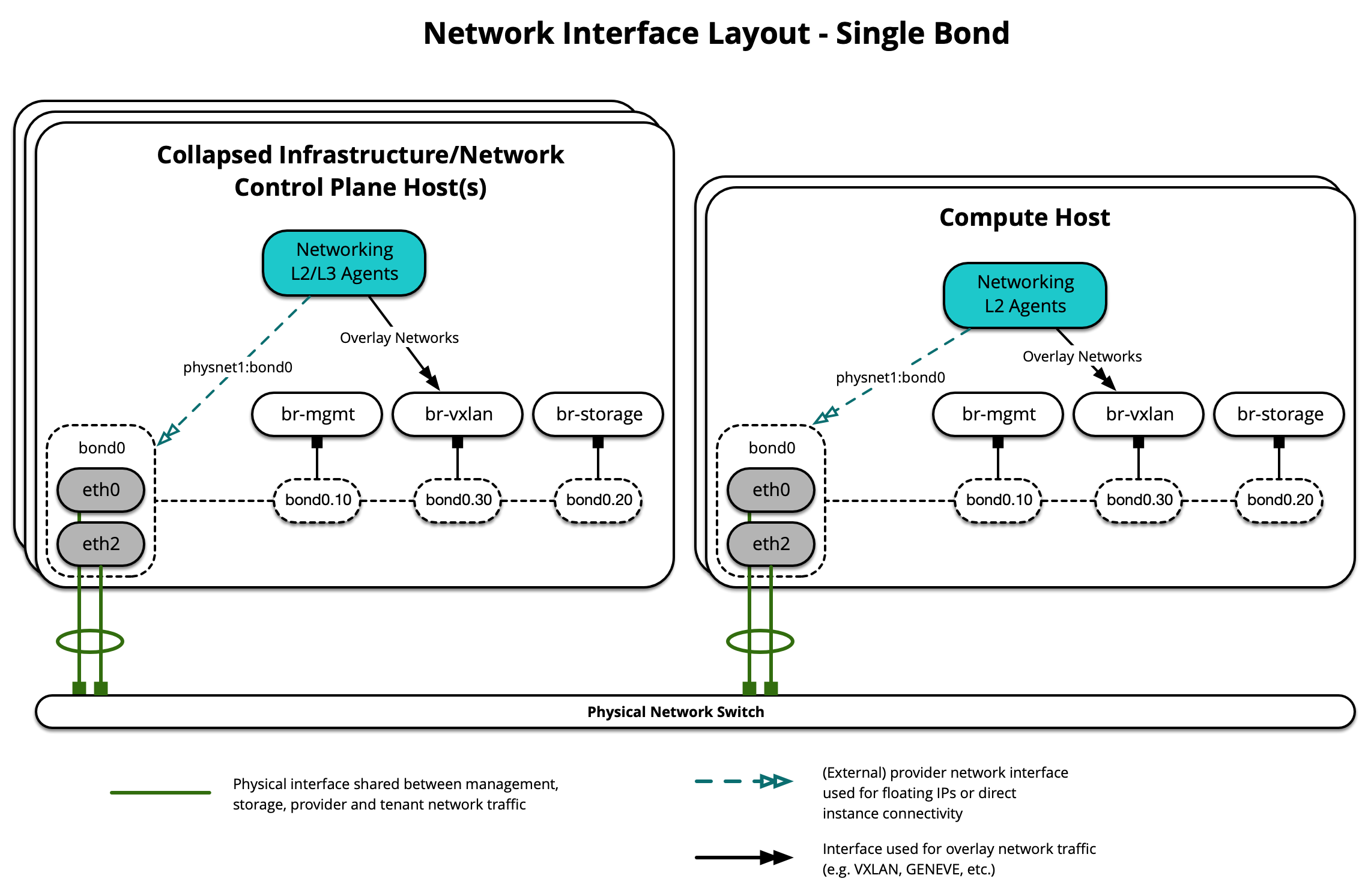

The following diagram demonstrates hosts using a single bond:

Each host requires the correct network bridges to be implemented. The following is the /etc/network/interfaces file for infra1 using a single bond.

Note

If your environment does not have eth0, but instead has p1p1 or some other interface name, ensure that all references to eth0 in all configuration files are replaced with the appropriate name. The same applies to additional network interfaces.

    # This is a multi-NIC bonded configuration to implement the required bridges
    # for OpenStack-Ansible. This illustrates the configuration of the first
    # Infrastructure host and the IP addresses assigned should be adapted
    # for implementation on the other hosts.
    #
    # After implementing this configuration, the host will need to be
    # rebooted.

    # Assuming that eth0/1 and eth2/3 are dual port NIC's we pair
    # eth0 with eth2 for increased resiliency in the case of one interface card
    # failing.
    auto eth0
    iface eth0 inet manual
        bond-master bond0
        bond-primary eth0

    auto eth1
    iface eth1 inet manual

    auto eth2
    iface eth2 inet manual
        bond-master bond0

    auto eth3
    iface eth3 inet manual

    # Create a bonded interface. Note that the "bond-slaves" is set to none. This
    # is because the bond-master has already been set in the raw interfaces for
    # the new bond0.
    auto bond0
    iface bond0 inet manual
        bond-slaves none
        bond-mode active-backup
        bond-miimon 100
        bond-downdelay 200
        bond-updelay 200

    # Container/Host management VLAN interface
    auto bond0.10
    iface bond0.10 inet manual
        vlan-raw-device bond0

    # OpenStack Networking VXLAN (tunnel/overlay) VLAN interface
    auto bond0.30
    iface bond0.30 inet manual
        vlan-raw-device bond0

    # Storage network VLAN interface (optional)
    auto bond0.20
    iface bond0.20 inet manual
        vlan-raw-device bond0

    # Container/Host management bridge
    auto br-mgmt
    iface br-mgmt inet static
        bridge_stp off
        bridge_waitport 0
        bridge_fd 0
        bridge_ports bond0.10
        address 172.29.236.11
        netmask 255.255.252.0
        gateway 172.29.236.1
        dns-nameservers 8.8.8.8 8.8.4.4

    # OpenStack Networking VXLAN (tunnel/overlay) bridge
    #
    # Nodes hosting Neutron agents must have an IP address on this interface,
    # including COMPUTE, NETWORK, and collapsed INFRA/NETWORK nodes.
    #
    auto br-vxlan
    iface br-vxlan inet static
        bridge_stp off
        bridge_waitport 0
        bridge_fd 0
        bridge_ports bond0.30
        address 172.29.240.16
        netmask 255.255.252.0

    # OpenStack Networking VLAN bridge
    #
    # The "br-vlan" bridge is no longer necessary for deployments unless Neutron
    # agents are deployed in a container. Instead, a direct interface such as
    # bond0 can be specified via the "host_bind_override" override when defining
    # provider networks.
    #
    #auto br-vlan
    #iface br-vlan inet manual
    #    bridge_stp off
    #    bridge_waitport 0
    #    bridge_fd 0
    #    bridge_ports bond0

    # compute1 Network VLAN bridge
    #auto br-vlan
    #iface br-vlan inet manual
    #    bridge_stp off
    #    bridge_waitport 0
    #    bridge_fd 0
    #

    # Storage bridge (optional)
    #
    # Only the COMPUTE and STORAGE nodes must have an IP address
    # on this bridge. When used by infrastructure nodes, the
    # IP addresses are assigned to containers which use this
    # bridge.
    #
    auto br-storage
    iface br-storage inet manual
        bridge_stp off
        bridge_waitport 0
        bridge_fd 0
        bridge_ports bond0.20

    # compute1 Storage bridge
    #auto br-storage
    #iface br-storage inet static
    #    bridge_stp off
    #    bridge_waitport 0
    #    bridge_fd 0
    #    bridge_ports bond0.20
    #    address 172.29.244.16
    #    netmask 255.255.252.0
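
In the file above, each VLAN sub-interface is named `<raw-device>.<vlan-id>` (for example, bond0.10 on bond0), and each bridge attaches to one of them. The following is a minimal sketch that splits such names and compares the resulting VLAN IDs with the example table earlier in this document. The helper names and the two dictionaries are hypothetical and simply restate values from the example; they are not read from a real host.

```python
def split_vlan_interface(name):
    """Split a name such as 'bond0.10' into ('bond0', 10)."""
    raw_device, sep, vlan_id = name.rpartition(".")
    if not sep or not raw_device or not (vlan_id.isascii() and vlan_id.isdigit()):
        raise ValueError(f"{name!r} is not of the form <device>.<vlan-id>")
    vid = int(vlan_id)
    # 802.1Q reserves VLAN IDs 0 and 4095.
    if not 1 <= vid <= 4094:
        raise ValueError(f"VLAN ID {vid} is outside 1-4094")
    return raw_device, vid


def mismatched_bridges(bridge_ports, expected_vlans):
    """Return the sorted bridges whose port VLAN ID differs from the expected one."""
    return sorted(
        bridge
        for bridge, port in bridge_ports.items()
        if split_vlan_interface(port)[1] != expected_vlans[bridge]
    )


# bridge_ports values from the example file above.
bridge_ports = {"br-mgmt": "bond0.10", "br-vxlan": "bond0.30", "br-storage": "bond0.20"}
# VLAN IDs from the example network table.
expected_vlans = {"br-mgmt": 10, "br-vxlan": 30, "br-storage": 20}

print(split_vlan_interface("bond0.10"))
print(mismatched_bridges(bridge_ports, expected_vlans))
```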

Multiple interfaces or bonds

OpenStack-Ansible supports the use of multiple interfaces or sets of bonded interfaces that carry traffic for OpenStack services and instances.

Open Virtual Network (OVN)

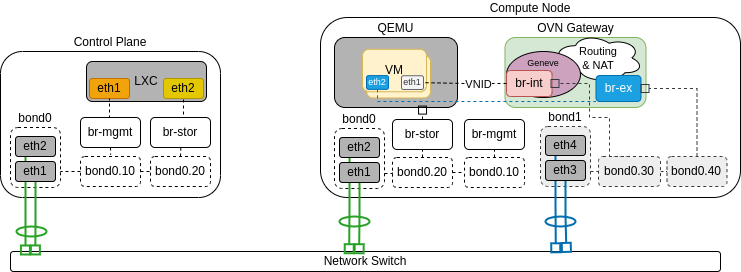

The following diagrams demonstrate hosts using multiple bonds with OVN.

In the scenario below, only the network node is connected to the external network and the compute nodes do not have external connectivity, so routers are needed for external connectivity:

The following diagram demonstrates a compute node serving as an OVN gateway. It is connected to the public network, which allows VMs to be connected to public networks not only through routers, but also directly:

Open vSwitch

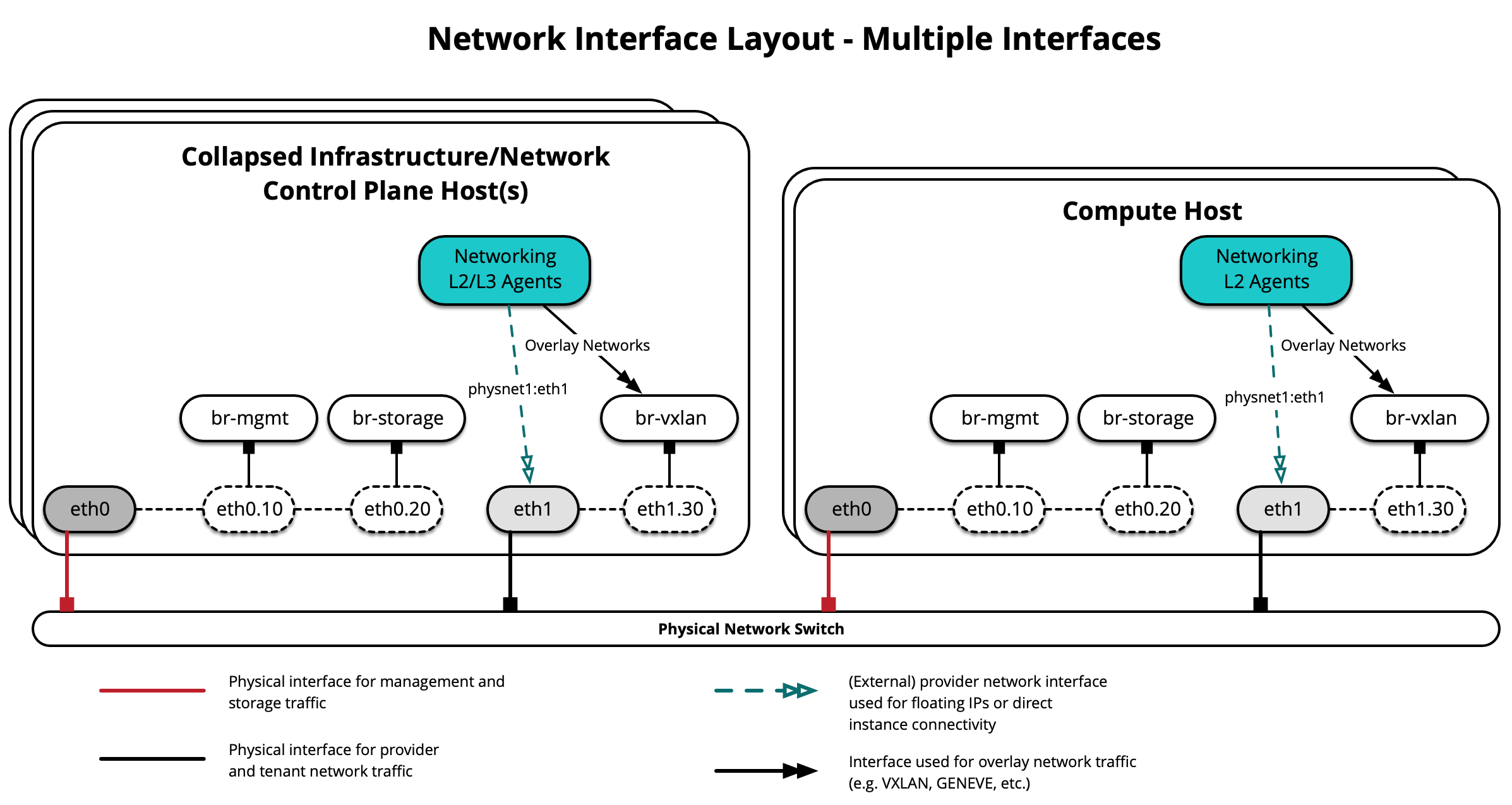

The following diagram demonstrates hosts using multiple interfaces in the OVS scenario:

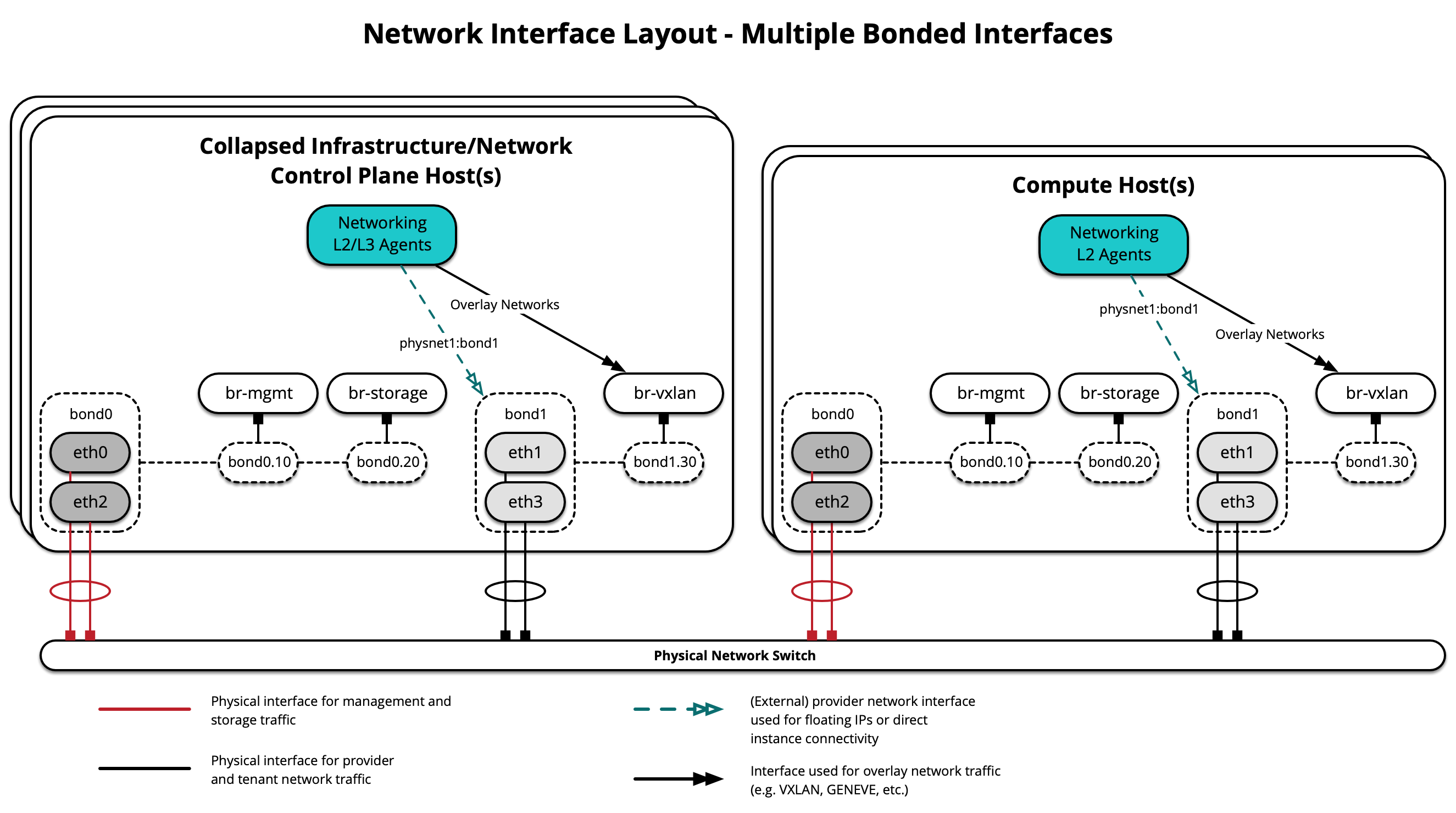

The following diagram demonstrates hosts using multiple bonds:

Each host requires the correct network bridges to be implemented. The following is the /etc/network/interfaces file for infra1 using multiple bonded interfaces.

Note

If your environment does not have eth0, but instead has p1p1 or some other interface name, ensure that all references to eth0 in all configuration files are replaced with the appropriate name. The same applies to additional network interfaces.

    # This is a multi-NIC bonded configuration to implement the required bridges
    # for OpenStack-Ansible. This illustrates the configuration of the first
    # Infrastructure host and the IP addresses assigned should be adapted
    # for implementation on the other hosts.
    #
    # After implementing this configuration, the host will need to be
    # rebooted.

    # Assuming that eth0/1 and eth2/3 are dual port NIC's we pair
    # eth0 with eth2 and eth1 with eth3 for increased resiliency
    # in the case of one interface card failing.
    auto eth0
    iface eth0 inet manual
        bond-master bond0
        bond-primary eth0

    auto eth1
    iface eth1 inet manual
        bond-master bond1
        bond-primary eth1

    auto eth2
    iface eth2 inet manual
        bond-master bond0

    auto eth3
    iface eth3 inet manual
        bond-master bond1

    # Create a bonded interface. Note that the "bond-slaves" is set to none. This
    # is because the bond-master has already been set in the raw interfaces for
    # the new bond0.
    auto bond0
    iface bond0 inet manual
        bond-slaves none
        bond-mode active-backup
        bond-miimon 100
        bond-downdelay 200
        bond-updelay 200

    # This bond will carry VLAN and VXLAN traffic to ensure isolation from
    # control plane traffic on bond0.
    auto bond1
    iface bond1 inet manual
        bond-slaves none
        bond-mode active-backup
        bond-miimon 100
        bond-downdelay 250
        bond-updelay 250

    # Container/Host management VLAN interface
    auto bond0.10
    iface bond0.10 inet manual
        vlan-raw-device bond0

    # OpenStack Networking VXLAN (tunnel/overlay) VLAN interface
    auto bond1.30
    iface bond1.30 inet manual
        vlan-raw-device bond1

    # Storage network VLAN interface (optional)
    auto bond0.20
    iface bond0.20 inet manual
        vlan-raw-device bond0

    # Container/Host management bridge
    auto br-mgmt
    iface br-mgmt inet static
        bridge_stp off
        bridge_waitport 0
        bridge_fd 0
        bridge_ports bond0.10
        address 172.29.236.11
        netmask 255.255.252.0
        gateway 172.29.236.1
        dns-nameservers 8.8.8.8 8.8.4.4

    # OpenStack Networking VXLAN (tunnel/overlay) bridge
    #
    # Nodes hosting Neutron agents must have an IP address on this interface,
    # including COMPUTE, NETWORK, and collapsed INFRA/NETWORK nodes.
    #
    auto br-vxlan
    iface br-vxlan inet static
        bridge_stp off
        bridge_waitport 0
        bridge_fd 0
        bridge_ports bond1.30
        address 172.29.240.16
        netmask 255.255.252.0

    # OpenStack Networking VLAN bridge
    #
    # The "br-vlan" bridge is no longer necessary for deployments unless Neutron
    # agents are deployed in a container. Instead, a direct interface such as
    # bond1 can be specified via the "host_bind_override" override when defining
    # provider networks.
    #
    #auto br-vlan
    #iface br-vlan inet manual
    #    bridge_stp off
    #    bridge_waitport 0
    #    bridge_fd 0
    #    bridge_ports bond1

    # compute1 Network VLAN bridge
    #auto br-vlan
    #iface br-vlan inet manual
    #    bridge_stp off
    #    bridge_waitport 0
    #    bridge_fd 0
    #

    # Storage bridge (optional)
    #
    # Only the COMPUTE and STORAGE nodes must have an IP address
    # on this bridge. When used by infrastructure nodes, the
    # IP addresses are assigned to containers which use this
    # bridge.
    #
    auto br-storage
    iface br-storage inet manual
        bridge_stp off
        bridge_waitport 0
        bridge_fd 0
        bridge_ports bond0.20

    # compute1 Storage bridge
    #auto br-storage
    #iface br-storage inet static
    #    bridge_stp off
    #    bridge_waitport 0
    #    bridge_fd 0
    #    bridge_ports bond0.20
    #    address 172.29.244.16
    #    netmask 255.255.252.0
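
The comments in the file above pair eth0 with eth2 and eth1 with eth3 so that each bond spans both physical cards. The following is a minimal sketch of a check for that property. The card labels `nic-a` and `nic-b` and the helper `bonds_on_single_card` are hypothetical; the port-to-card mapping follows the assumption stated in the file's comments and must be confirmed against your own hardware.

```python
# Assumed layout: eth0/eth1 on one dual-port NIC, eth2/eth3 on the other.
port_to_card = {"eth0": "nic-a", "eth1": "nic-a", "eth2": "nic-b", "eth3": "nic-b"}

# bond-master assignments from the example file above.
bond_members = {"bond0": ["eth0", "eth2"], "bond1": ["eth1", "eth3"]}


def bonds_on_single_card(bond_members, port_to_card):
    """Return the sorted bonds whose member ports all sit on one physical card."""
    return sorted(
        bond
        for bond, ports in bond_members.items()
        if len({port_to_card[port] for port in ports}) < 2
    )


# An empty list means every bond survives the loss of a single card.
print(bonds_on_single_card(bond_members, port_to_card))
```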

Additional resources

For more information on how to properly configure network interface files and OpenStack-Ansible configuration files for different deployment scenarios, please refer to the following:

For network agents and container networking topologies, please refer to the following: